- Three major Chinese AI labs allegedly used 24,000 fake accounts to generate 16 million Claude interactions, extracting a competitor's outputs at industrial scale.

- This kind of large-scale prompting is effectively model distillation: turning a rival model’s behavior into a training dataset, which the article characterizes as theft rather than mere ToS abuse.

- AI APIs create a unique exposure where every response can function as transferable intellectual property, making model providers inadvertent suppliers to competitors.

- Running an operation of this size requires coordinated infrastructure (identity/payment fraud, distributed traffic, data pipelines), indicating well-funded, organized actors.

- Detecting extraction campaigns is asymmetrical and difficult because individual calls look legitimate and common defenses (rate limits, IP blocks, payment checks) can be worked around at scale.

Three Chinese AI labs used 24,000 fraudulent accounts to run 16 million interactions with Claude. Not to build products. Not to serve customers. To extract knowledge from a competitor's model at industrial scale.

Anthropic caught them. The labs involved, DeepSeek, Moonshot AI, and MiniMax, are not obscure startups. They are well-funded organizations building their own foundation models. And they apparently decided that one shortcut to improving those models was to systematically mine a rival's AI for its outputs.

This is not a terms-of-service violation dressed up as news. This is what industrial espionage looks like when the factory floor is an API endpoint.

The mechanics of large-scale extraction

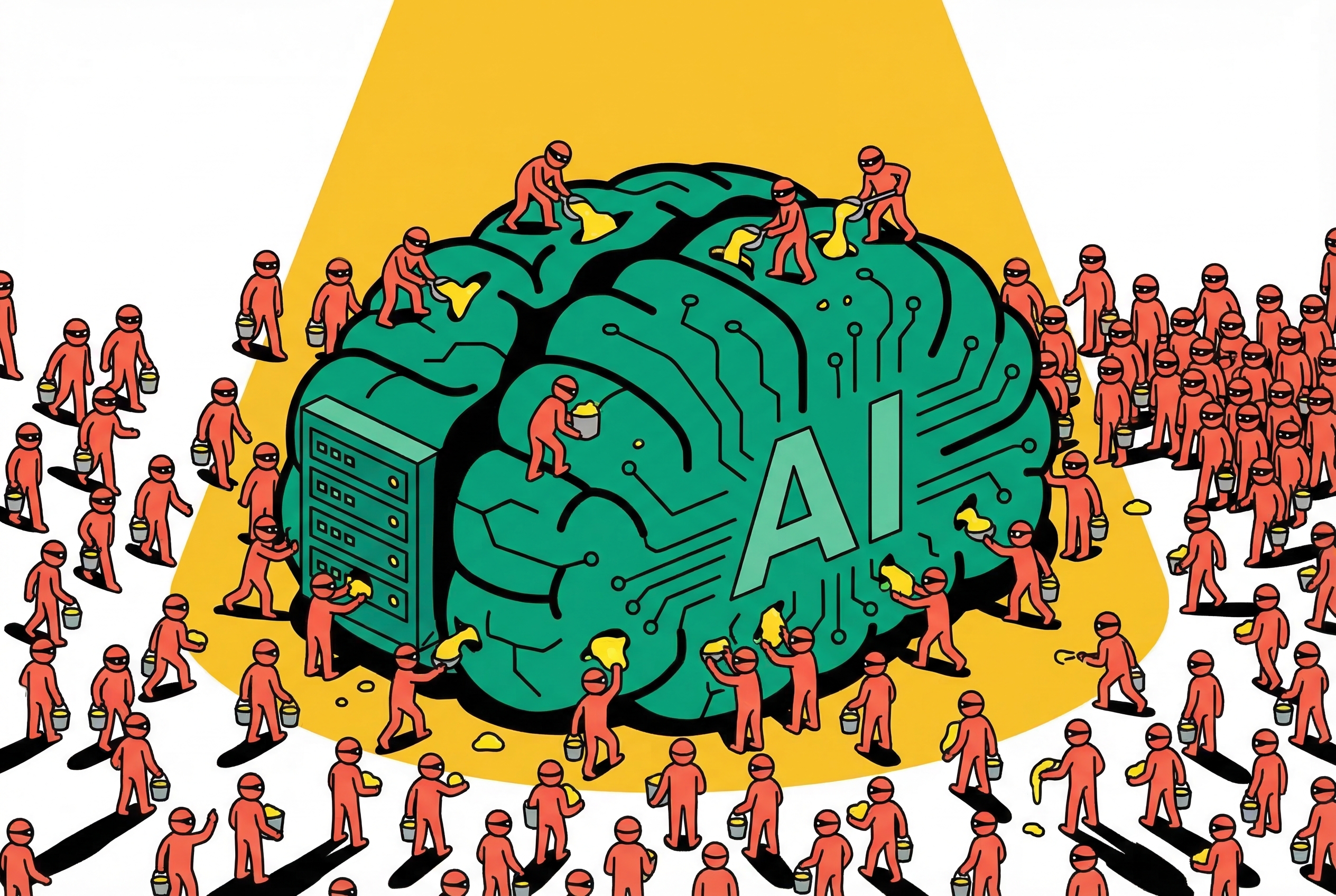

Think about what 24,000 accounts and 16 million interactions actually mean in practice. That is not someone running a few experiments. That is coordinated infrastructure. You need identity generation at scale, payment methods that don't trace back to a single entity, query patterns distributed enough to avoid rate limiters, and a pipeline on the other end to collect, store, and process the outputs.

This is a supply chain operation. Someone designed it, funded it, staffed it, and ran it long enough to generate 16 million data points before getting caught. The sophistication required to maintain that many accounts without triggering automated fraud detection is itself a meaningful engineering effort.

And the purpose is straightforward. When you prompt a frontier model millions of times with carefully constructed queries, you are building a dataset of how that model reasons, what it knows, how it structures answers, where it draws boundaries. That dataset becomes training material. You are using your competitor's years of research, their RLHF tuning, their safety work, their instruction-following refinement, as a free input to your own development pipeline.

The industry term is model distillation. The accurate term is theft at scale.

Your competitor is also your attack surface

The AI industry has a structural problem that most other industries do not share. Your product is simultaneously a service, a knowledge base, and a potential training resource for anyone who can access it. Every API call returns intellectual property. Not source code, not weights, but the behavioral output of billions of dollars in research.

Traditional software companies worry about competitors reverse-engineering their products. That takes time, specialized skills, and often produces imperfect results. AI model extraction is different. You do not need to understand the architecture. You do not need access to the weights. You just need enough well-crafted prompts and enough accounts to run them, and you can build a synthetic dataset that captures a meaningful fraction of what the target model can do.

This makes every AI company an unwitting supplier to its competitors. Anthropic caught these three labs. The question nobody can answer is how many others are doing the same thing to every major model provider right now.

Detection is harder than it looks

Anthropic deserves credit for catching this. But the detection problem is genuinely difficult. A single fraudulent account making normal-looking API calls is indistinguishable from a legitimate customer. The signal only emerges at scale, when you notice that thousands of accounts share behavioral patterns, query distributions, or infrastructure fingerprints.
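To make "the signal only emerges at scale" concrete, here is a minimal sketch of one way a provider might link accounts through shared infrastructure fingerprints. It is not a description of Anthropic's detection method, which the article does not detail. The fingerprint strings (`card:...`, `tls:...`), the account IDs, and the `min_cluster_size` threshold are all hypothetical.

```python
from collections import defaultdict


def cluster_by_fingerprint(accounts, min_cluster_size=3):
    """Group account IDs that are linked by any shared fingerprint.

    `accounts` maps account_id -> set of fingerprint strings. The
    fingerprints are hypothetical signals (e.g. a payment-card hash).
    Returns clusters of at least `min_cluster_size`, largest first.
    """
    # Union-find over account IDs: each account starts as its own root.
    parent = {account_id: account_id for account_id in accounts}

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving
            a = parent[a]
        return a

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Link each account to the first account seen with the same fingerprint.
    first_seen = {}
    for account_id, fingerprints in accounts.items():
        for fp in fingerprints:
            if fp in first_seen:
                union(first_seen[fp], account_id)
            else:
                first_seen[fp] = account_id

    clusters = defaultdict(set)
    for account_id in accounts:
        clusters[find(account_id)].add(account_id)

    large = [c for c in clusters.values() if len(c) >= min_cluster_size]
    return sorted(large, key=len, reverse=True)


# Illustrative data only: acct-1 and acct-2 share a TLS hash,
# acct-2 and acct-3 share a card hash, acct-4 shares nothing.
accounts = {
    "acct-1": {"card:aa11", "tls:x9"},
    "acct-2": {"card:bb22", "tls:x9"},
    "acct-3": {"card:bb22", "tls:y4"},
    "acct-4": {"card:cc33", "tls:z7"},
}
suspicious = cluster_by_fingerprint(accounts)
```

No single account here looks unusual on its own. The cluster of three appears only once accounts are compared against each other, and the links are transitive: `acct-1` and `acct-3` share nothing directly but are joined through `acct-2`.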

Rate limiting helps, but sophisticated actors distribute their traffic. IP blocking helps, but cloud infrastructure makes IP addresses disposable. Payment verification helps, but identity fraud is a mature industry. Every countermeasure has a workaround when the attacker is a well-resourced organization rather than an individual.

The detection challenge is fundamentally asymmetric. The defender needs to catch every coordinated campaign. The attacker only needs one to succeed long enough to extract useful data. And "long enough" might be days, not months. Sixteen million interactions sounds like a lot, but spread across 24,000 accounts it is roughly 667 interactions per account. That is an unremarkable usage pattern for a legitimate developer.
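The arithmetic is worth spelling out. The totals below come from the article. The campaign length and the per-account daily limit are assumptions invented purely for illustration, since the article gives neither.

```python
TOTAL_INTERACTIONS = 16_000_000  # figure from the article
ACCOUNTS = 24_000                # figure from the article

# Hypothetical values, chosen only to illustrate the point.
CAMPAIGN_DAYS = 30
PER_ACCOUNT_DAILY_LIMIT = 1_000

per_account = TOTAL_INTERACTIONS / ACCOUNTS
per_account_per_day = per_account / CAMPAIGN_DAYS
aggregate_per_day = TOTAL_INTERACTIONS / CAMPAIGN_DAYS

print(f"{per_account:.0f} interactions per account")              # 667
print(f"{per_account_per_day:.1f} per account per day")           # 22.2
print(f"{aggregate_per_day:,.0f} per day across all accounts")    # 533,333
print("any account over its limit:",
      per_account_per_day > PER_ACCOUNT_DAILY_LIMIT)              # False
```

Under these assumed numbers, each account sits far below its own limit while the campaign as a whole pulls more than half a million responses a day. A per-account rate limiter never sees the aggregate.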

The geopolitical layer

It is impossible to discuss this without acknowledging the US-China dimension. DeepSeek, Moonshot AI, and MiniMax are all Chinese companies. The US has imposed export controls on advanced AI chips, restricted model access, and treated AI capability as a national security concern. China has responded by accelerating domestic AI development through every available channel.

In that context, systematic extraction from American AI platforms is not just competitive intelligence. It is a strategy for closing a capability gap that export controls are specifically designed to maintain. Whether the Chinese government directed this activity or these labs acted independently is unclear. The effect is the same.

This also creates a policy problem. If US-based AI companies are required to serve global customers through their APIs, but those APIs are being used as extraction tools by foreign competitors, the current regulatory framework has no good answer. Export controls cover chips and model weights. They do not cover the behavioral outputs of a model accessed through a standard commercial API.

The gap between what is regulated and what is exploitable is exactly where this attack lives.

What this changes for the industry

Every AI company with a public API should be rethinking three things right now.

First, identity verification. The current standard for API access is roughly equivalent to signing up for a SaaS trial. If 24,000 fake accounts can operate simultaneously, the identity layer is not built for adversarial conditions. KYC processes that financial institutions have used for decades may need to become standard for API access, at least at scale.

Second, behavioral analysis. Detecting coordinated extraction requires looking at query patterns across accounts, not just within them. What topics are being systematically explored? Which capabilities are being probed? Are thousands of accounts converging on the same knowledge domains in ways that organic usage would not produce? This is a machine learning problem in its own right: using your own models to detect when someone is trying to steal them.
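As a rough sketch of what cross-account analysis could look like, the snippet below compares per-account topic histograms with cosine similarity and flags pairs that are nearly identical. It assumes an upstream step has already labeled each prompt with a topic. The topic names, account IDs, and the 0.95 threshold are hypothetical, and this is one possible approach rather than anything the article attributes to a specific provider.

```python
import math
from collections import Counter
from itertools import combinations


def cosine(a, b):
    """Cosine similarity between two sparse count vectors (Counters)."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def similar_account_pairs(topic_counts, threshold=0.95):
    """Return account pairs whose topic distributions nearly coincide.

    `topic_counts` maps account_id -> Counter of topic label -> count.
    """
    pairs = []
    for id_a, id_b in combinations(sorted(topic_counts), 2):
        if cosine(topic_counts[id_a], topic_counts[id_b]) >= threshold:
            pairs.append((id_a, id_b))
    return pairs


# Illustrative data only.
topic_counts = {
    "acct-1": Counter({"chemistry": 40, "code": 35, "law": 25}),
    "acct-2": Counter({"chemistry": 42, "code": 33, "law": 25}),
    "acct-3": Counter({"recipes": 90, "code": 10}),
}
flagged = similar_account_pairs(topic_counts)
```

Comparing every pair is quadratic in the number of accounts, so at 24,000 accounts a real system would need something cheaper, such as hashing or approximate nearest-neighbor search. High similarity alone is also weak evidence, since many legitimate developers ask about the same things. It is a signal to combine with others, not a verdict.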

Third, output throttling. Not rate limiting in the traditional sense, but limiting the information density of responses when patterns suggest extraction rather than legitimate use. This is delicate. Degrading service for legitimate customers to frustrate potential extractors is a losing trade. But selectively reducing output quality for suspicious accounts is a defense worth exploring.
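One way to picture output throttling is as a policy that maps a suspicion score to a response tier. The sketch below is hypothetical throughout: the `ResponsePolicy` fields, the three tiers, the token budgets, and the thresholds are invented for illustration, and the suspicion score is assumed to come from some separate detection system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponsePolicy:
    max_output_tokens: int
    allow_step_by_step: bool  # whether detailed reasoning is returned


# Hypothetical tiers; real values would need careful tuning.
FULL = ResponsePolicy(max_output_tokens=4096, allow_step_by_step=True)
REDUCED = ResponsePolicy(max_output_tokens=1024, allow_step_by_step=True)
MINIMAL = ResponsePolicy(max_output_tokens=256, allow_step_by_step=False)


def policy_for(suspicion_score, reduce_at=0.6, minimal_at=0.9):
    """Pick a response tier from a suspicion score in [0.0, 1.0]."""
    if not 0.0 <= suspicion_score <= 1.0:
        raise ValueError("suspicion_score must be between 0.0 and 1.0")
    if suspicion_score >= minimal_at:
        return MINIMAL
    if suspicion_score >= reduce_at:
        return REDUCED
    return FULL


# Most traffic should land in the full tier.
assert policy_for(0.1) is FULL
assert policy_for(0.7) is REDUCED
assert policy_for(0.95) is MINIMAL
```

The thresholds carry the whole trade-off. Set them too low and legitimate customers get degraded answers. Set them too high and the throttle never fires on a careful extractor.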

The bigger question

Anthropic caught three labs. That is the story everyone will report. The story that matters more is the one we cannot report, because nobody has caught the others yet.

Every major AI platform (OpenAI, Google, Anthropic, Mistral) is a potential extraction target. The economics are compelling. Why spend hundreds of millions training a model from scratch when you can extract meaningful capability from a competitor's model for the cost of API credits and some fraudulent accounts? The return on investment for model extraction is probably the highest in the industry, because the cost of the alternative is so enormous.

The AI industry built itself on open APIs and easy access because that is how you grow a platform. That openness is now a vulnerability. Not a theoretical one. A demonstrated, quantified, 16-million-interaction vulnerability.

Anthropic's response to this will matter. But the industry's response matters more. Because right now, every AI company's API is both a product and an unlocked door. And 24,000 fake accounts just proved that someone is willing to walk through it.

If you build or rely on AI products, assume your model endpoints are also an attack surface and treat high-volume, patterned usage as a security problem, not just “abuse.” Watch for coordinated account clusters, unusual prompt distributions, and infrastructure fingerprints, and invest in layered defenses that combine fraud detection, behavioral analytics, and tighter access controls for high-value capabilities. If you’re a buyer of AI services, ask providers how they detect and respond to model-extraction attempts, because the integrity and uniqueness of the model you’re paying for can be eroded by competitors copying its behavior.