- More than half of department-level AI initiatives run without formal approval or oversight, creating a widespread governance vacuum rather than a technology problem.

- AI adoption is moving faster than risk management: 78% of leaders say adoption is outpacing their organization’s ability to manage the associated risks.

- Many companies lack basic visibility into AI usage, with 45.6% not knowing their workforce adoption rate or what tools and data are being used.

- Department-by-department tool choices lead to “Fractured AI,” multiplying privacy, security, retention, and audit challenges while increasing long-term integration and compliance debt.

- Even where governance efforts exist, 61% of organizations say their data assets aren’t ready for generative AI due to silos, poor structure, and weak labeling.

Somewhere in your organization right now, a department is using an AI tool that nobody in leadership approved, evaluated, or even knows about. The marketing team signed up for one platform. Sales adopted another. Finance built something internal. Legal is still drafting the policy that was supposed to prevent all of this.

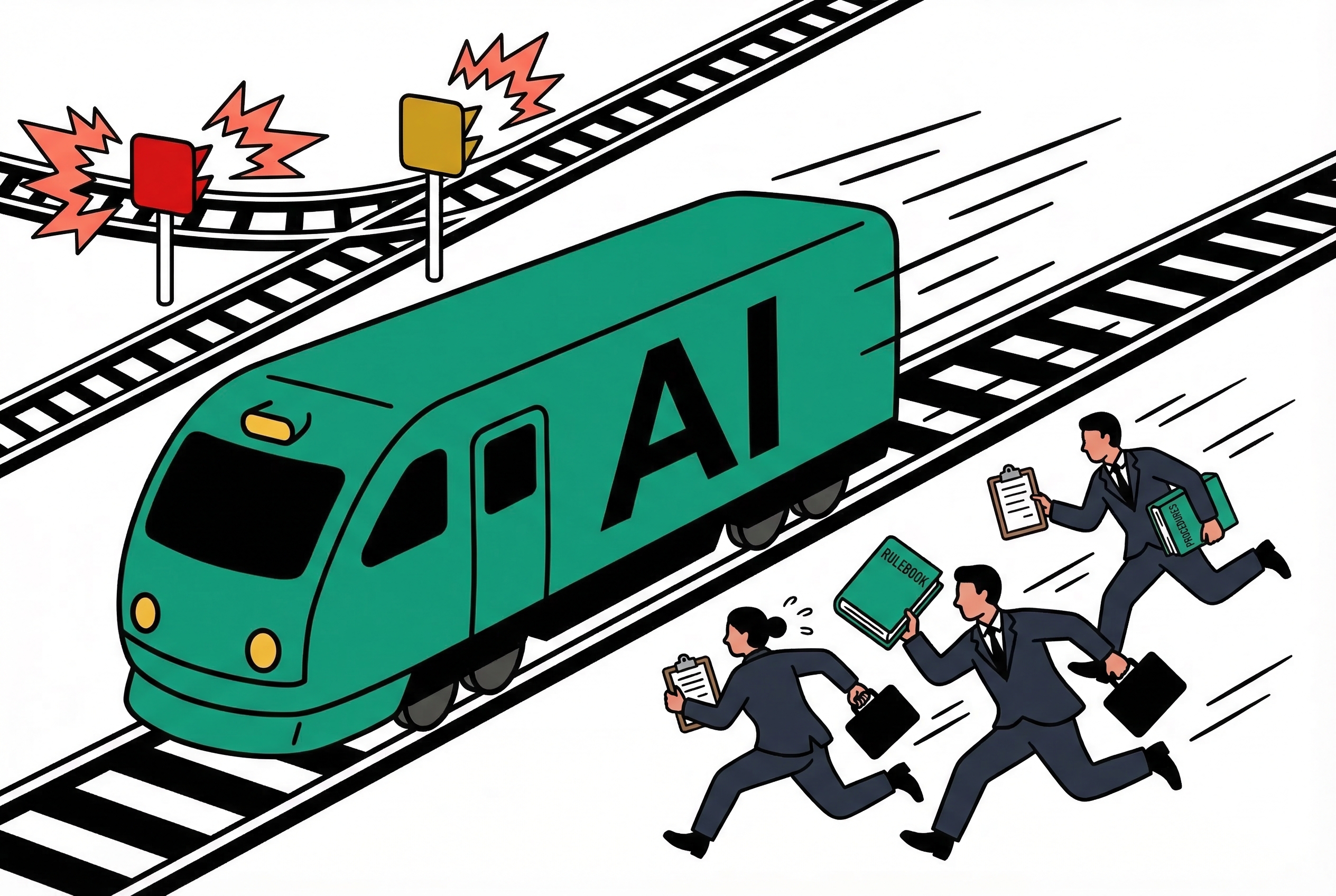

This isn't a hypothetical. According to recent data, 52% of department-level AI initiatives are operating without formal approval or oversight. More than half of the departmental AI projects in the average enterprise are ungoverned. Not ungovernable. Just ungoverned, because nobody built the structure to manage them before the adoption wave hit.

The speed gap is the actual crisis

The numbers tell a clear story. 78% of leaders say AI adoption is outpacing their organization's ability to manage the associated risks. That's not a minority concern. That's nearly four out of five executives admitting, on the record, that their companies are moving faster than their ability to stay in control.

This isn't a technology failure. The technology works. Models are more capable than they were a year ago. The tooling has matured. The APIs are stable. What hasn't matured is everything around the technology: the policies, the oversight structures, the risk frameworks, the basic organizational awareness of what's actually deployed.

An EY survey captured the same dynamic from a different angle: autonomous AI adoption is surging across enterprises while oversight mechanisms fall further behind with each quarter. The gap isn't closing. It's widening.

Nobody knows what's running

Here's the number that should concern every executive reading this: 45.6% of organizations don't even know their workforce AI adoption rate. Not "don't have precise figures." Don't know. At all.

Think about what that means in practice. You're a CTO. You're responsible for data security, regulatory compliance, and operational risk. And you cannot answer the basic question of how many people in your organization are using AI tools, which tools they're using, or what data they're feeding into them.

This is the equivalent of running a bank where half the tellers have installed their own accounting software and nobody in risk management has a list. It would be unthinkable in any other domain. In AI, it's the norm.

Fractured AI is the long-term threat

When every department picks its own AI solution independently, you get what analysts are calling "Fractured AI." Marketing uses one vendor's models. Engineering uses another. Customer support built something custom. HR is evaluating three options simultaneously.

Each of these decisions might be individually reasonable. The marketing team picked the tool that best understands brand voice. Engineering chose the one with the best code generation. Support went with whatever integrated into their ticket system.

But zoom out, and the picture is a mess. Data flows into five different systems with five different privacy policies, five different retention rules, and five different security postures. Outputs from one system can't be validated against another. There's no unified audit trail. When a regulator asks "how does your organization use AI?", the honest answer is "we don't actually know, because there are at least a dozen answers depending on which floor you're standing on."

The long-term cost of this fragmentation will dwarf whatever efficiency each department gained by moving fast. Integration debt, compliance exposure, and the sheer operational overhead of managing a dozen disconnected AI implementations will compound over time.

The data foundation isn't there either

Even organizations that are trying to govern their AI adoption are running into a more fundamental problem. 61% of companies admit their data assets are not ready for generative AI. The information that would make AI genuinely useful (proprietary data, internal knowledge, customer histories) is unstructured, siloed, poorly labeled, or all three.

This explains a pattern I see constantly. Nearly two-thirds of organizations remain stuck in the pilot stage of AI adoption. They run a proof of concept, it shows promise, and then scaling it requires clean, accessible, well-governed data that doesn't exist. The pilot works on curated demo data. Production requires the real thing, and the real thing is a mess.

70% of organizations find it hard to scale AI projects that rely on proprietary data. Not "find it challenging." Find it hard. As in, they've tried and hit walls they don't know how to get past. And these are the companies that got further than the pilot stage in the first place.

This is a management crisis, not a technology crisis

The standard narrative frames AI adoption challenges as technical problems. The models hallucinate. The integrations are complex. The infrastructure isn't ready. Those are real issues, but they're solvable engineering problems with known approaches.

The governance gap is different. It's a management problem, an organizational design problem, a leadership problem. And it's harder to solve because the people who need to solve it (executives, legal teams, compliance officers) are often the ones with the least hands-on understanding of what AI tools actually do and how they're being used day to day.

Companies aren't failing at AI because the technology doesn't work. They're failing because they're adopting faster than they can govern. The real risk isn't that AI makes mistakes. Every technology makes mistakes. The real risk is that nobody knows which AI is making which decisions in which department, and nobody has the visibility to find out.

What governance that works actually looks like

The organizations I see handling this well share a few common traits, and none of them involve slowing down AI adoption to a crawl.

They maintain an inventory. Not a perfect one, but a living document that tracks which AI tools are in use, in which departments, processing what types of data. This sounds basic. Most companies don't have it.
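A minimal sketch of what such an inventory could look like in code, assuming a simple in-memory register. The record fields, tool names, and department names below are hypothetical placeholders, not taken from any real deployment; a spreadsheet or database with the same columns would serve the same purpose.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AIToolRecord:
    """One row of the register: which tool, where, touching what data."""
    tool: str
    department: str
    data_types: frozenset
    approved: bool = False  # assumption: unapproved until someone signs off


def tools_by_department(records):
    """Group tool names by department so each team's footprint is visible."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record.department].append(record.tool)
    return {dept: sorted(tools) for dept, tools in grouped.items()}


def unapproved(records):
    """List the tools that are in use but have no formal approval."""
    return sorted(r.tool for r in records if not r.approved)


# Hypothetical example entries.
inventory = [
    AIToolRecord("copywriter-bot", "marketing", frozenset({"public"}), approved=True),
    AIToolRecord("lead-scorer", "sales", frozenset({"customer"})),
    AIToolRecord("ledger-assistant", "finance", frozenset({"financial"})),
]

print(tools_by_department(inventory))
print(unapproved(inventory))
```

The point of the structure is less the code than the three questions each record answers: which tool, in which department, on what kind of data.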

They set boundaries, not bans. Rather than prohibiting AI use outright and watching people route around the prohibition, they define categories. These data types can go into external models. These can't. These use cases need review. These are pre-approved. This gives teams room to move while keeping sensitive operations under control.
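The boundary categories described above can be sketched as a small policy check. This is an illustration under stated assumptions: the data categories, use-case names, and the three-way decision are hypothetical, and a real policy would come from legal and security review rather than a hard-coded set.

```python
from enum import Enum


class Decision(Enum):
    PRE_APPROVED = "pre-approved"
    NEEDS_REVIEW = "needs review"
    BLOCKED = "blocked"


# Hypothetical categories; a real organization would define its own.
EXTERNAL_ALLOWED = {"public", "marketing_copy"}
EXTERNAL_BLOCKED = {"customer_pii", "financial_records"}
PRE_APPROVED_USE_CASES = {"drafting", "summarization"}


def evaluate(data_types, use_case, external_model):
    """Return a Decision for a proposed AI use.

    Blocked data never goes to an external model. Data that is not
    explicitly allowed externally, and use cases that are not
    pre-approved, go to review instead of being silently permitted.
    """
    data_types = set(data_types)
    if external_model and data_types & EXTERNAL_BLOCKED:
        return Decision.BLOCKED
    if external_model and not data_types <= EXTERNAL_ALLOWED:
        return Decision.NEEDS_REVIEW
    if use_case in PRE_APPROVED_USE_CASES:
        return Decision.PRE_APPROVED
    return Decision.NEEDS_REVIEW


print(evaluate({"public"}, "drafting", external_model=True))
print(evaluate({"customer_pii"}, "drafting", external_model=True))
print(evaluate({"public"}, "hiring_decisions", external_model=True))
```

Defaulting unknown data types and unknown use cases to review, rather than to approval or to a ban, is the design choice that keeps teams moving without leaving gaps.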

They assign ownership. Someone, a real person with actual authority, is responsible for AI governance. Not a committee that meets quarterly. Not a shared responsibility that belongs to everyone and therefore no one. A named individual who can make decisions, escalate issues, and be held accountable.

They audit regularly. Not annually. Quarterly at minimum. What tools are in use today that weren't last quarter? What data is flowing where? What changed? The AI landscape inside a company shifts faster than almost any other technology layer. Governance has to keep pace or it's fiction.
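The three audit questions (what is new, what is gone, what changed) amount to a diff between two snapshots of the tool register. A minimal sketch, assuming each snapshot is a plain dictionary mapping a tool name to the set of data types it processes; the tool names and data types are hypothetical.

```python
def diff_inventories(previous, current):
    """Compare two snapshots of the AI tool register.

    Each snapshot maps tool name -> set of data types it processes.
    Returns tools added, tools removed, and tools whose data types changed.
    """
    prev_tools, curr_tools = set(previous), set(current)
    return {
        "added": sorted(curr_tools - prev_tools),
        "removed": sorted(prev_tools - curr_tools),
        "changed": sorted(
            tool
            for tool in prev_tools & curr_tools
            if previous[tool] != current[tool]
        ),
    }


# Hypothetical snapshots from two consecutive quarters.
last_quarter = {
    "copywriter-bot": {"public"},
    "lead-scorer": {"customer"},
}
this_quarter = {
    "copywriter-bot": {"public", "customer"},  # now also sees customer data
    "ticket-triage": {"support_tickets"},      # new since last quarter
}

print(diff_inventories(last_quarter, this_quarter))
```

The "changed" list is the one most likely to be missed by hand: the tool is already known, but the data flowing into it is not what was originally reviewed.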

The window is closing

There's a finite period where AI governance gaps are embarrassing but manageable. You can still get your arms around the problem. You can still build the inventory, set the policies, assign the ownership.

That window closes when a data breach traces back to an unapproved AI tool that was processing customer information nobody authorized it to touch. It closes when a regulator asks for your AI use documentation and you have to explain that roughly half of your AI initiatives ran outside formal channels. It closes when two departments discover their AI systems have been making contradictory decisions based on different data sets, and nobody noticed for six months.

The companies that will navigate AI successfully aren't necessarily the ones adopting fastest. They're the ones that figured out, early enough, that adoption without governance isn't innovation. It's just organized chaos with a larger blast radius.

Start by getting visibility: inventory which AI tools are in use, who’s using them, and what data is being shared, then treat that as a living register rather than a one-time audit. Put lightweight governance in place quickly (clear approval paths, minimum security and retention standards, and an audit trail) so adoption can continue without turning into unmanaged risk. At the same time, invest in data readiness (classification, access controls, and cleaning key knowledge sources) so AI value comes from trusted internal data instead of fragmented, ad hoc usage.