- Enterprise AI agent pilots often fail between demo and production, with organizations averaging 3.7 failed attempts before a successful deployment.

- Despite rapid adoption forecasts (40% of apps embedding agentic AI by 2028), a similar share of projects (40%) will be cancelled or deprioritized by 2027, concentrating wins among a few leaders.

- Real deployment costs are high, about $340K to $780K per agent with 16 to 28 weeks to production, so 3.7 failed attempts at even the low end exceed $1.2M in sunk cost.

- The most common blockers are poor data quality (67%), integration complexity, and weak governance, with only 23% of enterprises having formal agent governance frameworks.

- Successful deployments tend to be narrowly scoped, built on existing data pipelines, and defined upfront with clear success metrics and a “kill switch” to prevent drift.

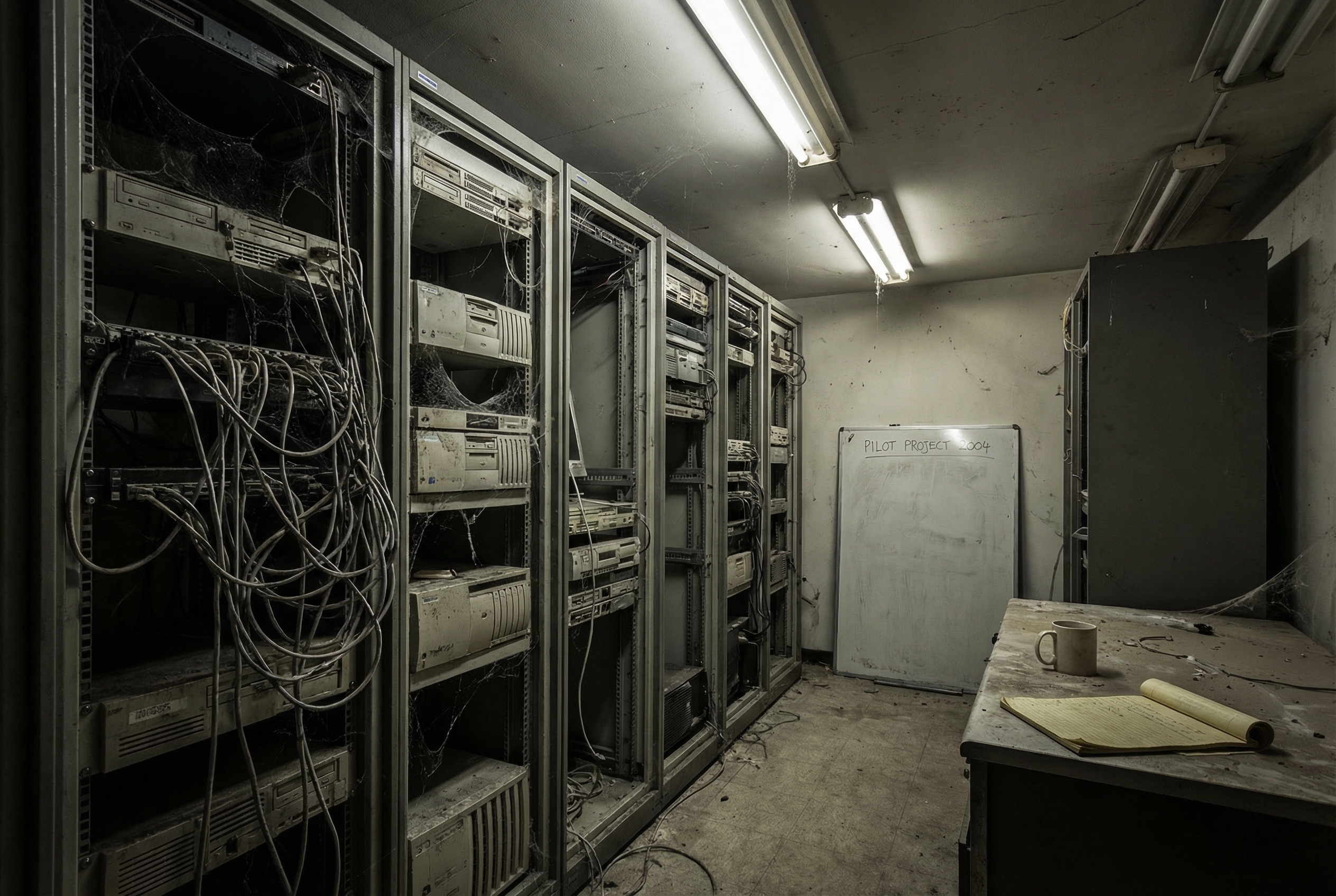

The Enterprise AI Agent Graveyard Is Real, and It's Expensive

Every enterprise technology leader I talk to right now has the same story. They ran an AI agent pilot. It looked promising. Then it died somewhere between the demo and production.

Gartner predicts 40% of enterprise applications will have agentic AI embedded by 2028, up from roughly 5% today. That sounds like inevitable momentum. But the same Gartner research includes a less flattering number: 40% of agentic AI projects will be cancelled or deprioritized by 2027. These two stats aren't contradictory. They describe a market where the winners pull further ahead while everyone else burns through budget learning the same painful lessons.

The average enterprise now goes through 3.7 failed agent pilots before landing a successful production deployment. That's not a typo. Nearly four expensive attempts before something sticks.

The $340K Learning Curve

The cost reality of agent deployment is something vendors conveniently leave out of their pitch decks. A typical enterprise agent deployment runs $340K to $780K when you account for infrastructure, integration work, and ongoing maintenance. The timeline from proof-of-concept to production averages 16 to 28 weeks, and that's for the ones that actually make it.

Most don't make it. The reasons are predictable but apparently hard to internalize. Data quality tops the list, cited by 67% of enterprises as a primary blocker. Integration complexity comes next. Then there's the governance gap: only 23% of enterprises have formal AI agent governance frameworks in place. The rest are deploying autonomous systems without clear rules about what those systems can and cannot do.

The math on failed pilots is brutal. If an enterprise averages 3.7 failures at even the low end of the cost range, that's over $1.2 million in sunk costs before a single agent reaches production. The ROI timeline for successful deployments runs 8 to 14 months to break even. Add the failed attempts and some organizations are looking at two to three years before they see net positive returns on their agent investments.
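The sunk-cost figure is simple multiplication, and it is worth seeing both ends of the range. The sketch below uses only the numbers quoted above; the function and constant names are illustrative, and it makes the same simplifying assumption as the paragraph, namely that each failed pilot costs as much as a full deployment.

```python
# Figures quoted in the article; names are illustrative, not from any tool.
AVG_FAILED_PILOTS = 3.7
COST_PER_AGENT_LOW = 340_000   # USD, low end of the quoted range
COST_PER_AGENT_HIGH = 780_000  # USD, high end of the quoted range


def sunk_cost(failed_pilots: float, cost_per_pilot: int) -> float:
    """Money spent on pilots that never reach production.

    Assumes every failed pilot costs a full deployment, which is the
    same simplification the article's estimate makes.
    """
    return failed_pilots * cost_per_pilot


low = sunk_cost(AVG_FAILED_PILOTS, COST_PER_AGENT_LOW)
high = sunk_cost(AVG_FAILED_PILOTS, COST_PER_AGENT_HIGH)

print(f"Low end:  ${low:,.0f}")   # Low end:  $1,258,000
print(f"High end: ${high:,.0f}")  # High end: $2,886,000
```

At the high end of the range the same 3.7 failures approach $2.9 million, which is why the low-end figure is best read as a floor.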

What the Graveyard Projects Have in Common

The pattern of failure is remarkably consistent. Failed agent projects tend to share three characteristics.

They start too broad. The initial scope covers multiple departments, several data sources, and a vague mandate to "automate workflows." The team spends months on architecture and never ships anything a user touches.

They skip the data work. Agent performance is directly tied to the quality and accessibility of the data it operates on. Most enterprises have fragmented data across dozens of systems with inconsistent schemas, incomplete records, and no clear ownership. Building an agent on top of that is building on sand.

They lack a kill switch. Not literally, though that matters too. They lack clear success metrics defined before launch. Without a concrete definition of what "working" looks like, projects drift, scope expands, and eventually someone with budget authority asks what they're getting for their money. Nobody has a good answer.
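The second failure mode, skipping the data work, is the easiest of the three to check before any agent code exists. As a rough sketch, the snippet below measures what share of records have every required field populated. The field names, the sample records, and the 95% readiness threshold are all hypothetical; a real readiness review would also look at schema consistency and ownership.

```python
from typing import Any, Dict, List

# Hypothetical threshold: share of complete records needed before building.
READINESS_THRESHOLD = 0.95


def completeness(records: List[Dict[str, Any]], required: List[str]) -> float:
    """Fraction of records in which every required field is populated.

    A field counts as missing when it is absent, None, or an empty string.
    Zero is treated as a valid value.
    """
    if not records:
        return 0.0
    complete = sum(
        all(r.get(field) not in (None, "") for field in required)
        for r in records
    )
    return complete / len(records)


# Made-up sample data for illustration only.
sample = [
    {"id": 1, "owner": "ops", "amount": 10.0},
    {"id": 2, "owner": "", "amount": 5.0},          # missing owner
    {"id": 3, "owner": "finance", "amount": None},  # missing amount
    {"id": 4, "owner": "ops", "amount": 0.0},       # zero is still a value
]

score = completeness(sample, ["id", "owner", "amount"])
ready = score >= READINESS_THRESHOLD
print(f"complete: {score:.0%}, ready: {ready}")  # complete: 50%, ready: False
```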

The Deployments That Actually Work

The enterprises getting agents into production share a different set of patterns. JP Morgan's agent for trade settlement. Mayo Clinic's diagnostic triage agent. Toyota's supply chain optimization agent. These aren't moonshot projects. They're narrow, well-scoped applications built on existing infrastructure.

Narrow scope from day one. Successful deployments pick one process, one workflow, one decision point. Toyota didn't try to optimize their entire supply chain with an agent. They targeted specific bottlenecks where the data was clean, the process was well-understood, and the potential savings were quantifiable before writing a line of code.

Existing data pipelines. Every successful deployment I've seen builds on data infrastructure that was already working. Mayo Clinic's triage agent runs on clinical data systems that were mature and well-maintained long before anyone mentioned AI agents. The agent layer is the last mile, not the entire journey.

Executive sponsorship with patience. This is the unglamorous one. Successful deployments have a senior sponsor who understands the 8 to 14 month ROI timeline and has the organizational standing to protect the project from quarterly budget reviews. Without that air cover, promising projects get killed before they can prove their value.

Clear success metrics defined upfront. Not "improve efficiency" or "reduce costs." Specific numbers. JP Morgan defined their trade settlement agent's success criteria in terms of processing time reduction and error rate before the project started. When the results came in, the conversation was about measured outcomes, not subjective impressions.
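One way to make "specific numbers" concrete is to write the criteria down as data before the pilot starts and let a stop/go check run against them. The following is a minimal sketch, not a description of how any of the named companies did it. The metric names and targets are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Metric:
    name: str
    target: float
    higher_is_better: bool

    def met(self, observed: float) -> bool:
        """True when the observed value reaches the target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def stop_or_go(
    metrics: List[Metric], observed: Dict[str, float]
) -> Tuple[str, List[str]]:
    """Return "go" only if every metric is met, plus the list of misses."""
    missed = [m.name for m in metrics if not m.met(observed[m.name])]
    return ("go" if not missed else "stop", missed)


# Hypothetical targets, agreed before the project starts.
CRITERIA = [
    Metric("processing_time_reduction_pct", target=30.0, higher_is_better=True),
    Metric("error_rate_pct", target=0.5, higher_is_better=False),
]

# Hypothetical pilot results at the review point.
decision, missed = stop_or_go(
    CRITERIA,
    {"processing_time_reduction_pct": 34.0, "error_rate_pct": 0.8},
)
print(decision, missed)  # stop ['error_rate_pct']
```

Because the targets are fixed in advance, the review conversation is about which numbers were missed, not about whether the pilot "feels" successful.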

The Governance Problem Nobody Wants to Talk About

Only 23% of enterprises have formal governance frameworks for AI agents. That means 77% are deploying autonomous systems, systems that make decisions and take actions, without established rules about boundaries, escalation, auditing, or accountability.

This isn't a theoretical concern. An agent that processes financial transactions, triages medical cases, or manages supply chain orders is making consequential decisions. When it makes a wrong one, who's responsible? What's the audit trail? How do you explain the decision to a regulator?

The enterprises that get governance right treat it as a prerequisite, not a follow-up. They define what the agent can and cannot do before deployment. They build monitoring into the system architecture rather than bolting it on later. They establish human review triggers for decisions above certain thresholds.
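Those three practices (explicit boundaries, built-in monitoring, and human review triggers) can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a governance framework: the action names, the 10,000 threshold, and the `AgentPolicy` class are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List


@dataclass
class AgentPolicy:
    allowed_actions: FrozenSet[str]  # what the agent may do at all
    review_threshold: float          # amounts above this go to a human
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def decide(self, action: str, amount: float) -> str:
        """Return "deny", "escalate", or "allow", and record the decision."""
        if action not in self.allowed_actions:
            outcome = "deny"
        elif amount > self.review_threshold:
            outcome = "escalate"
        else:
            outcome = "allow"
        # Monitoring is part of the decision path, not bolted on afterwards.
        self.audit_log.append(
            {"action": action, "amount": amount, "outcome": outcome}
        )
        return outcome


# Hypothetical policy for a payments-style agent.
policy = AgentPolicy(
    allowed_actions=frozenset({"settle_trade", "issue_refund"}),
    review_threshold=10_000.0,
)

print(policy.decide("issue_refund", 250.0))      # allow
print(policy.decide("settle_trade", 50_000.0))   # escalate
print(policy.decide("delete_account", 0.0))      # deny
print(len(policy.audit_log))                     # 3
```

Every call leaves an audit entry, including denied ones, which is the record a regulator or an internal reviewer would ask for.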

Financial services leads in agent deployment partly because they already have compliance infrastructure. The regulatory frameworks that make banking feel slow and bureaucratic turn out to be exactly the kind of structure that makes agent deployment manageable. Healthcare is similar. Manufacturing has quality control processes that translate well to agent governance.

Education, government, and small business lag behind. Not because the technology is less applicable, but because the governance infrastructure doesn't exist yet and building it from scratch is expensive.

The Two-Speed Market

What's emerging is a two-speed market. Organizations with mature data infrastructure, established governance practices, and patient executive sponsors are deploying agents successfully and compounding their advantage. Organizations without those foundations are cycling through expensive pilots and falling further behind.

The gap isn't about technology access. Everyone can get an API key. The gap is about organizational readiness: the boring, expensive, unglamorous work of cleaning data, building governance frameworks, and establishing clear success criteria before chasing the next demo.

If you're planning an agent deployment in 2026, the uncomfortable truth is that your success probability has very little to do with which AI model you choose or which platform you build on. It has almost everything to do with whether your data is clean, your scope is narrow, your metrics are defined, and your leadership is willing to wait a year for returns.

The enterprises filling the agent graveyard aren't less ambitious than the ones succeeding. They're less patient, less disciplined about scope, and less honest about the state of their data. That's fixable. But it requires admitting that the bottleneck was never the AI.

Marco Kotrotsos writes about practical AI implementation at gloss.run and acdigest.substack.com.

If you’re considering an enterprise agent, start with one tightly defined workflow where the data is already reliable and the value can be measured, rather than a broad “automate everything” mandate. Invest early in data readiness and integration planning, and put governance in place before the agent touches production systems. Define success metrics and an explicit stop/go decision point upfront so the project can be scaled confidently, or shut down quickly, before costs compound.