- AI “vibe coding” makes it easy for non-engineers to build working demos, but it doesn’t provide the judgment needed to make software production-ready.

- The biggest risk isn’t who writes the code; it’s organizations treating a successful demo as a sufficient standard for shipping.

- Prototypes are valuable as communication (“something like this”), but skipping an engineering handoff turns them into fragile products full of unhandled edge cases, security gaps, and reliability issues.

- “Task expansion” means engineering teams often inherit extra work: auditing, rewriting, and hardening AI-generated prototypes whose design expectations are already locked in.

- Shipping prototype code to production doesn’t save time; it defers the work, and the cleanup cost compounds quickly once real users and real conditions hit.

A product manager at a mid-size fintech told me last month that she'd built a working prototype of an internal pricing tool using Claude Code. Took her an afternoon. Her engineering team was impressed. Her VP was thrilled. The prototype got demo'd at the all-hands. Two weeks later, it was running in production.

Nobody reviewed the code. Nobody asked whether it handled concurrent users. Nobody checked what happened when someone entered a negative number in the discount field. It worked in the demo, so it shipped.

This is the story playing out at thousands of companies right now, and the risk isn't where most people think it is.

The real problem isn't who's building

Vibe coding democratized building. That's genuinely good. Product managers, designers, domain experts, people who understand problems deeply but never learned Python, can now translate their ideas into working software. That's a net positive for the industry.

What vibe coding didn't democratize is judgment. The judgment to know that a working demo and production-ready software are separated by a canyon of edge cases, security concerns, error handling, and architectural decisions that a prototype never had to face. The ability to make something work is not the same as the ability to make something that keeps working.

The danger isn't that non-developers are coding. The danger is that organizations are treating "it works on my laptop" as a shipping standard.

The handoff problem

Intuit's CTO described something interesting in a recent Bloomberg piece. Product managers on the QuickBooks team are now building prototypes with Claude. He sees this as a positive, and he's right. "At least now, the product manager can come to the engineer and say, 'I want something like this.'" A working prototype often conveys intent more precisely than a written product requirements document can.

But there's a critical phrase in that sentence: "something like this." The prototype is a communication tool. It shows intent. It demonstrates the interaction model. It is not the production implementation, and treating it as one creates a specific, predictable failure mode.

The PM demo works because it was tested by one person on one machine with expected inputs. Production code works because someone thought about what happens when 500 people hit it simultaneously, when the database connection drops mid-transaction, when a user pastes 50,000 characters into a field designed for 200. Those aren't edge cases. They're Tuesday.
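To make the gap concrete, here is a minimal sketch of the kind of boundary check a demo never needs and production always does. It assumes a hypothetical pricing form with a percentage discount and a free-text note capped at 200 characters; the names `validate_pricing_input`, `PricingInput`, and `MAX_NOTE_LENGTH` are illustrative, not taken from any real tool.

```python
from dataclasses import dataclass

# Hypothetical limit, mirroring the "field designed for 200" example.
MAX_NOTE_LENGTH = 200


class ValidationError(ValueError):
    """Raised when input falls outside what the tool is designed to accept."""


@dataclass(frozen=True)
class PricingInput:
    discount_percent: float
    note: str


def validate_pricing_input(raw_discount: str, raw_note: str) -> PricingInput:
    """Reject bad input at the boundary instead of letting it reach pricing logic."""
    try:
        discount = float(raw_discount)
    except ValueError:
        raise ValidationError("discount must be a number") from None

    # The range check also rejects "nan" and "inf", which float() accepts:
    # comparisons against nan are always False.
    if not (0.0 <= discount <= 100.0):
        raise ValidationError("discount must be between 0 and 100")

    if len(raw_note) > MAX_NOTE_LENGTH:
        raise ValidationError(f"note must be at most {MAX_NOTE_LENGTH} characters")

    return PricingInput(discount_percent=discount, note=raw_note)
```

A prototype tested by one person with expected inputs never exercises any of the failing branches. Validation is only one item on the list; concurrency and dropped connections need their own handling.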

When organizations skip the handoff, when the prototype becomes the product without an engineering review pass, they're not saving time. They're borrowing it. And the interest rate on that loan is brutal.

Task expansion is already here

A Berkeley study on AI coding agents identified a pattern its authors call "task expansion." When non-technical colleagues start building prototypes with AI, engineering teams don't get freed up. They get a new category of work: cleaning up vibe-coded output that was never designed for production.

The prototypes arrive with implicit expectations. The PM already demo'd it to stakeholders. The design is locked. The workflow is set. Now the engineer's job is to make this specific implementation production-ready, rather than building the right implementation from scratch. It's like being handed a house built without permits and being told to bring it up to code without changing the floor plan.

This isn't a hypothetical. I'm watching it happen at companies right now. Engineers are spending an increasing percentage of their time not building new features, but auditing and rebuilding code that someone else generated with an AI agent. The generation took an afternoon. The cleanup takes weeks.

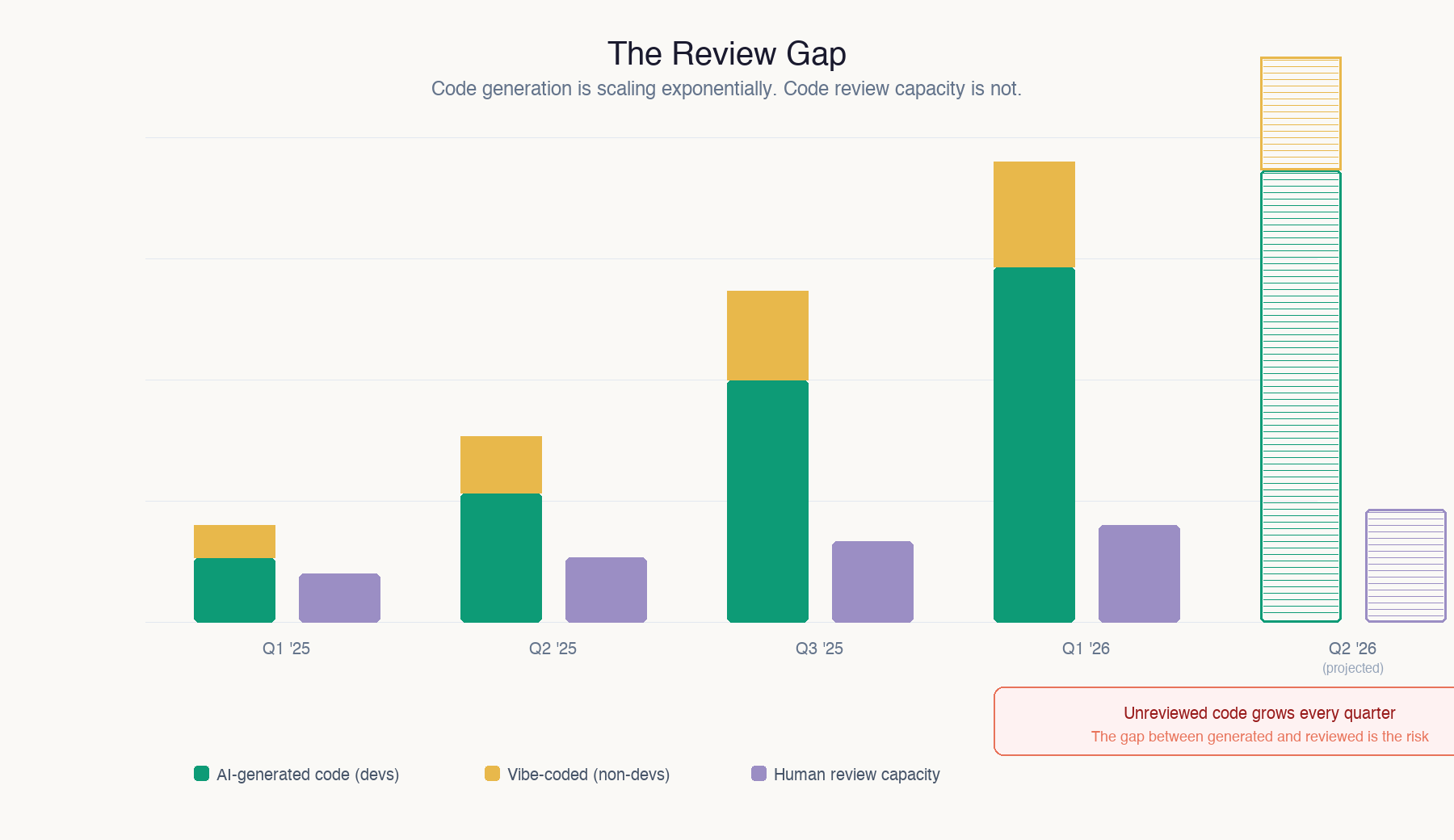

The review gap

Here's the math that should worry every engineering leader.

Code output is growing rapidly. When a PM can generate a working prototype in four hours, and a designer can build their own frontend in an afternoon, and the CEO is running three concurrent Claude Code sessions until midnight, the volume of code entering an organization's codebase is growing faster than at any point in software history.

Review capacity is not growing at all. You have the same number of senior engineers who understand your architecture, your security requirements, your scaling characteristics, and your technical debt. Those people are now expected to review five times the volume of code, much of it written by people who don't know what a code review is looking for.

Something has to give. Either review standards drop (they will, quietly), or review becomes a bottleneck that slows everything down (it will, loudly), or code ships without review (it already is, silently). None of those outcomes are good.
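The arithmetic is simple enough to sketch. The numbers below are hypothetical and chosen only to match the "five times the volume" framing: a team that can review 10 changes a week suddenly receives 50. The function name `review_backlog` is illustrative.

```python
def review_backlog(weekly_incoming: int, weekly_capacity: int, weeks: int) -> int:
    """Return the number of unreviewed changes after `weeks`, starting from zero."""
    backlog = 0
    for _ in range(weeks):
        # Whatever cannot be reviewed this week carries over to the next.
        backlog = max(0, backlog + weekly_incoming - weekly_capacity)
    return backlog


# Hypothetical figures: capacity stays flat while incoming volume is 5x.
print(review_backlog(weekly_incoming=50, weekly_capacity=10, weeks=4))  # 160
print(review_backlog(weekly_incoming=10, weekly_capacity=10, weeks=4))  # 0
```

Under these assumptions the queue grows by 40 changes every week. The only ways to stop it are to review less carefully, to block shipping, or to ship unreviewed.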

Maintenance debt nobody can service

There's a secondary problem that hasn't fully materialized yet, but will. Code that nobody on your team wrote is code that nobody on your team understands. When a PM generates a prototype and it ships to production, who maintains it?

The PM doesn't know how to debug it when something breaks at 2 a.m. The engineer who inherits it is reading AI-generated code they had no hand in designing, with no documentation, no tests, and no architectural context for why decisions were made. They're reverse-engineering a stranger's thought process, except the stranger was an LLM that doesn't remember what it was thinking.

I worked with a company last quarter that had fourteen internal tools built by various non-engineering employees using AI coding agents. Useful tools. Solving real problems. Zero tests. Zero documentation. No error monitoring. When one of them broke, it took the engineering team three days to understand the codebase well enough to fix a bug that would have taken the original builder thirty minutes to describe to an agent.

Multiply that by every vibe-coded prototype that quietly becomes load-bearing infrastructure, and you have a maintenance crisis that compounds every month.

This is a guardrails problem, not a gatekeeping problem

I want to be clear about something: the answer is not to stop non-developers from coding. That genie is out of the bottle, and honestly, it should be. The best product ideas often come from the people closest to the problem, and giving those people the ability to build is one of the most valuable things AI has done.

The answer is to build new organizational guardrails for a world where anyone can generate code but not everyone can evaluate it.

That means mandatory review before anything touches production, regardless of who wrote it. It means treating AI-generated prototypes as specifications, not as implementations. It means investing in automated testing, security scanning, and monitoring tools that catch the problems that a demo environment never surfaces. It means defining clearly where a prototype ends and where engineering begins.

Some companies are already doing this well. They've created "prototype to production" pipelines that let anyone build and demo, but require an engineering signoff before deployment. The PM still gets to build their prototype in an afternoon. The prototype still informs the final product. But there's a gate between "this works" and "this ships."
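One way such a gate might look in code is sketched below. This is an assumption about the shape of the check, not a description of any particular company's pipeline; `ReleaseCandidate`, `blocking_reasons`, and `can_ship` are hypothetical names, and the criteria are the ones listed in this section (review, tests, security scanning, monitoring).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ReleaseCandidate:
    """Hypothetical record of what is known about a change that wants to ship."""
    name: str
    engineering_signoff_by: Optional[str] = None
    tests_passed: bool = False
    security_scan_passed: bool = False
    monitoring_configured: bool = False


def blocking_reasons(candidate: ReleaseCandidate) -> List[str]:
    """List every unmet requirement; an empty list means the gate is open."""
    reasons = []
    if not candidate.engineering_signoff_by:
        reasons.append("no engineering signoff")
    if not candidate.tests_passed:
        reasons.append("automated tests missing or failing")
    if not candidate.security_scan_passed:
        reasons.append("security scan missing or failing")
    if not candidate.monitoring_configured:
        reasons.append("no error monitoring configured")
    return reasons


def can_ship(candidate: ReleaseCandidate) -> bool:
    """The gate between "this works" and "this ships"."""
    return not blocking_reasons(candidate)


# A demo-ready prototype: it works, but nothing about it has been verified.
prototype = ReleaseCandidate(name="pricing-tool")
print(can_ship(prototype))          # False
print(blocking_reasons(prototype))  # all four reasons
```

The gate applies to the change, not to the person who wrote it, and it says nothing about who is allowed to build. Returning the full list of reasons rather than a bare yes or no tells the prototype's author exactly what the handoff still needs.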

The urgency

The window for getting this right is narrow. Every month, more code ships without review. Every month, more prototypes quietly become production systems. Every month, the gap between code generation capacity and code evaluation capacity widens.

The organizations that build guardrails now will get the benefit of democratized building without the downside of ungoverned shipping. The ones that don't will spend the next two years untangling a codebase full of AI-generated code that nobody understands, nobody tested, and nobody is equipped to maintain.

Everyone should build. Not everyone should ship. The distinction matters more right now than it ever has.

If you’re using AI to prototype, treat the output as a spec and a conversation starter, not the implementation you ship. Put a clear handoff step in your process where engineers review for security, error handling, concurrency, data validation, and observability before anything reaches production. If you lead a team, set explicit “definition of done” standards so “it worked in the demo” never becomes the release gate, and budget time to rebuild prototypes properly rather than patching them under deadline pressure.