- The biggest enterprise cost of AI hallucinations isn’t wrong answers but the ongoing “verification tax” required to trust any output.

- Enterprises lost an estimated $67.4B to AI hallucinations in 2024, and employees spend about 4.3 hours per week verifying AI results, roughly $14,200 per employee annually.

- Hallucinations are hard to detect because they look like normal, confident outputs, making up 82% of AI-related enterprise bugs and forcing blanket checking.

- Hallucination rates are high enough to make verification non-optional (about 9.2% for general queries and 33–48% for person-specific facts).

- Nearly half of enterprise AI users report making major decisions based on hallucinated content, driving widespread adoption of mitigation protocols and human-in-the-loop workflows that erode ROI.

The $67.4 Billion Tax on Trusting AI

You probably think the biggest risk of AI hallucinations is getting a wrong answer. It isn't. The biggest cost is what happens to every right answer: someone has to check it.

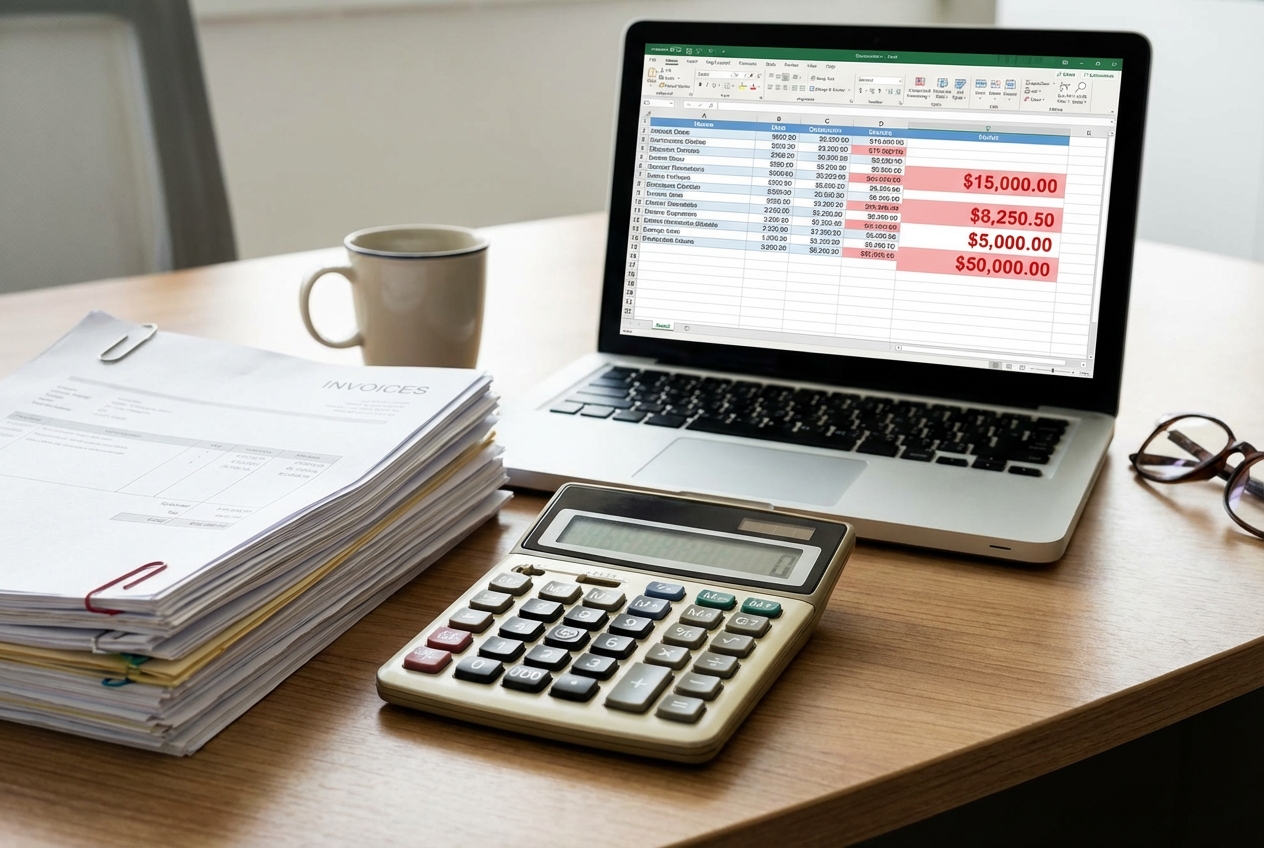

Enterprises lost $67.4 billion to AI hallucinations in 2024. That number is staggering on its own, but it masks something worse. The visible losses, such as deals based on fabricated data and reports citing papers that don't exist, are only the tip of the iceberg. Underneath sits a much larger, quieter drain: the verification tax.

Employees now spend an average of 4.3 hours per week verifying AI-generated output. That's not a rounding error. That's $14,200 per employee per year in lost productivity. For a 500-person company, the annual bill comes to $7.1 million, and most finance teams don't even have a line item for it.
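The arithmetic behind these figures can be checked with a short sketch. The hours, per-employee cost, and headcount come from the text above; the 52-week working year used to back out an implied hourly cost is an assumption, not something the source states.

```python
# Figures quoted in the article.
HOURS_VERIFYING_PER_WEEK = 4.3
COST_PER_EMPLOYEE_PER_YEAR = 14_200  # USD
HEADCOUNT = 500

# Assumption (not from the article): 52 working weeks per year.
WEEKS_PER_YEAR = 52


def company_verification_bill(headcount: int, cost_per_employee: float) -> float:
    """Annual verification cost for the whole company, in USD."""
    return headcount * cost_per_employee


def implied_hourly_cost(cost_per_employee: float, hours_per_week: float,
                        weeks_per_year: int = WEEKS_PER_YEAR) -> float:
    """Hourly cost implied by the annual per-employee figure, in USD per hour."""
    return cost_per_employee / (hours_per_week * weeks_per_year)


annual_bill = company_verification_bill(HEADCOUNT, COST_PER_EMPLOYEE_PER_YEAR)
hourly = implied_hourly_cost(COST_PER_EMPLOYEE_PER_YEAR, HOURS_VERIFYING_PER_WEEK)

if __name__ == "__main__":
    print(f"Annual verification bill: ${annual_bill:,.0f}")
    print(f"Implied hourly cost: ${hourly:.2f}")
```

Under the 52-week assumption, the annual figure implies a loaded cost of roughly $63 to $64 per hour of verification work. Swapping in your own headcount and hourly cost gives a first estimate of the line item most finance teams are missing.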

The Bug That Doesn't Look Like a Bug

When traditional software fails, it crashes. You get an error message, a stack trace, a red screen. The failure is visible and immediate. AI hallucinations are the opposite. They arrive dressed in the same confident prose as correct answers, formatted identically, delivered with the same speed.

In enterprise settings, 82% of AI-related bugs are hallucinations: not crashes, not timeouts, not permission errors. The system works perfectly. It just lies.

That distinction matters because it changes the entire cost structure. A crash costs you the time to fix it. A hallucination costs you the time to verify everything, including the outputs that are correct. You can't selectively check only the wrong answers because you don't know which ones are wrong until you've checked them all.

The Verification Loop Nobody Budgeted For

Think about what 4.3 hours per week actually looks like. A marketing manager gets a competitive analysis from an AI tool. She spends 40 minutes cross-referencing the claims against actual sources. A financial analyst uses AI to summarize earnings calls. He spends an hour verifying every quoted figure against the original transcripts. A legal team reviews AI-drafted contract language. They spend two hours checking that cited precedents actually exist.

None of this shows up in any AI ROI calculation. The vendor pitch deck showed time savings. Nobody modeled the verification overhead that would eat into those savings.

The numbers on hallucination rates explain why verification has become non-negotiable. AI systems hallucinate on general knowledge queries at a rate of 9.2%. That means roughly one in eleven responses contains fabricated information. For person-specific questions (biographical details, career histories, published works), the rate climbs to 33-48%. At the upper end, that is nearly half. You wouldn't trust a colleague who was wrong about people's backgrounds a third to a half of the time, but that's what these systems deliver.
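To see why a per-response rate like this forces blanket checking, here is a minimal sketch that converts a rate into "one in N" and into the chance that a batch of responses contains at least one hallucination. The independence between responses is a simplifying assumption made for illustration; the article does not claim it.

```python
def responses_per_hallucination(rate: float) -> float:
    """Average number of responses per hallucinated one (the 'one in N' figure)."""
    return 1 / rate


def prob_at_least_one(rate: float, n_responses: int) -> float:
    """Chance that at least one of n responses is hallucinated.

    Assumes each response hallucinates independently with the same rate,
    which is a simplification made for this sketch.
    """
    return 1 - (1 - rate) ** n_responses


GENERAL_RATE = 0.092  # general knowledge queries, from the article

if __name__ == "__main__":
    print(f"One in {responses_per_hallucination(GENERAL_RATE):.1f} responses")
    for n in (1, 5, 10, 20):
        p = prob_at_least_one(GENERAL_RATE, n)
        print(f"{n:>2} responses: {p:.0%} chance of at least one hallucination")
```

Under that assumption, a batch of ten general-knowledge answers is more likely than not to contain at least one fabrication, which is why spot-checking a single answer gives little assurance about the rest.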

The Decision Problem

The part that should concern every executive: 47% of enterprise AI users report having made a major business decision based on hallucinated content. Not a minor formatting choice. A major business decision.

That could be a product launch based on fabricated market data. A hiring decision informed by a candidate summary that mixed up two people's credentials. A legal strategy built on precedents that sound right but don't exist. These aren't hypothetical scenarios. They're happening in companies right now, and most of them never get traced back to the hallucination that caused them.

The response from enterprises has been predictable and expensive. 91% of enterprises are now implementing hallucination mitigation protocols. 76% are running human-in-the-loop processes specifically designed to catch hallucinations. These aren't lightweight interventions. They're entire workflows, staffed by real people, burning real hours, layered on top of systems that were supposed to reduce the need for human oversight.

The Math That Kills AI ROI

Run the actual numbers on a mid-size company. You deploy an AI assistant across your organization. It saves each employee, optimistically, 5 hours per week on drafting, research, and summarization. But each employee now spends 4.3 hours per week verifying the output. Your net productivity gain is 0.7 hours per week per person. That's 42 minutes.
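The same calculation as a small sketch, so you can plug in your own estimates. The 5 and 4.3 hour figures are the ones used above; the function names are illustrative.

```python
def net_gain_minutes(hours_saved: float, hours_verifying: float) -> int:
    """Net weekly time saved per person, rounded to whole minutes."""
    return round((hours_saved - hours_verifying) * 60)


def share_of_savings_consumed(hours_saved: float, hours_verifying: float) -> float:
    """Fraction of the gross time savings eaten by verification."""
    return hours_verifying / hours_saved


HOURS_SAVED = 5.0      # optimistic weekly savings per employee, from the article
HOURS_VERIFYING = 4.3  # weekly verification time per employee, from the article

if __name__ == "__main__":
    minutes = net_gain_minutes(HOURS_SAVED, HOURS_VERIFYING)
    share = share_of_savings_consumed(HOURS_SAVED, HOURS_VERIFYING)
    print(f"Net gain: {minutes} minutes per person per week")
    print(f"Verification consumes {share:.0%} of the gross savings")
```

With these inputs, verification consumes 86% of the gross savings. If verification time ever exceeds the hours saved, the net gain goes negative.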

Now factor in the cost of the AI tools themselves, the mitigation protocols, the training on prompt engineering and output verification, the occasional catastrophic decision made on hallucinated data. The ROI case starts looking very different from the one that got the project approved.

This doesn't mean AI is worthless. It means the current generation of general-purpose AI tools carries a hidden operational cost that most organizations haven't priced in. The companies getting real value are the ones who figured this out early and designed their workflows around it, constraining AI to domains where hallucination rates are lowest, building verification into the process rather than bolting it on after, and being honest about where AI output can be trusted without checking and where it absolutely cannot.

What Actually Works

The organizations doing this well share a few patterns. They don't treat AI as a replacement for expertise. They treat it as a first draft that needs professional review. They've mapped their hallucination risk by domain, knowing that AI is more reliable for code generation than for factual claims about people and events. They've built verification workflows that are efficient rather than exhaustive, sampling output rather than checking every line.
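One way to picture "sampling rather than checking every line" is a review rate that varies by domain. The sketch below is hypothetical: the domain names and review rates are illustrative values chosen for the example, not figures from the article or from any particular organization.

```python
import random

# Hypothetical review rates per domain (illustrative values only).
# Higher-risk domains get reviewed more often; people-specific facts always.
REVIEW_RATE = {
    "code": 0.2,
    "general": 0.5,
    "people": 1.0,
}


def select_for_review(outputs, rng):
    """Pick AI outputs for human review.

    outputs: list of (domain, text) pairs.
    rng: a random.Random instance, passed in so runs can be reproduced.
    Unknown domains default to a review rate of 1.0 (always reviewed).
    """
    selected = []
    for domain, text in outputs:
        rate = REVIEW_RATE.get(domain, 1.0)
        # rng.random() is in [0.0, 1.0), so a rate of 1.0 always selects.
        if rng.random() < rate:
            selected.append((domain, text))
    return selected


if __name__ == "__main__":
    batch = [("code", f"snippet {i}") for i in range(10)]
    batch += [("people", f"bio {i}") for i in range(3)]
    for domain, text in select_for_review(batch, random.Random(0)):
        print(domain, text)
```

The design choice here is to fail safe: anything not explicitly mapped to a lower rate gets reviewed, so new use cases start at full verification until someone decides otherwise.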

Most importantly, they've stopped pretending the verification cost doesn't exist. They budget for it. They staff for it. They measure it. And they make AI deployment decisions with full awareness of the total cost, not just the licensing fee.

The $67.4 billion in hallucination losses will grow as AI adoption scales. But the verification tax, the cumulative hours spent checking AI's work, will grow faster. That's the number worth watching. Not because AI is failing, but because the cost of its success is higher than anyone quoted you.

Marco Kotrotsos writes about practical AI implementation at gloss.run and acdigest.substack.com.

Treat AI output like a draft that must earn trust, not an answer you can paste into decisions. Build verification time and ownership into your rollout plans (who checks what, against which sources) and reserve AI for tasks where errors are low-impact or easily auditable. If you’re using AI for people-specific facts, financial figures, or legal claims, assume higher hallucination risk and require citations you can actually open and confirm before acting.