- Enterprises are shifting from frontier models to small language models (1–7B parameters) because they’re cheaper and easier to deploy at scale on owned hardware.

- The “Sovereign Edge” trend reflects demand for AI that runs locally for control, privacy, compliance, and reliability—without sending sensitive data to cloud APIs.

- Most real enterprise workloads (classification, extraction, summarization, translation, form processing, sentiment) don’t require frontier-level reasoning and can be handled by well-tuned SLMs with comparable practical accuracy.

- Running models on-device delivers major advantages: privacy by architecture, much lower latency (edge inference vs cloud round-trips), and cost structures that scale via one-time hardware instead of per-call fees.

- The real trade-off is theoretical maximum capability versus practical deployed capability—an SLM running everywhere can outperform a powerful model that’s too expensive or slow to use broadly.

Small Language Models Are the Real AI Deployment Story of 2026

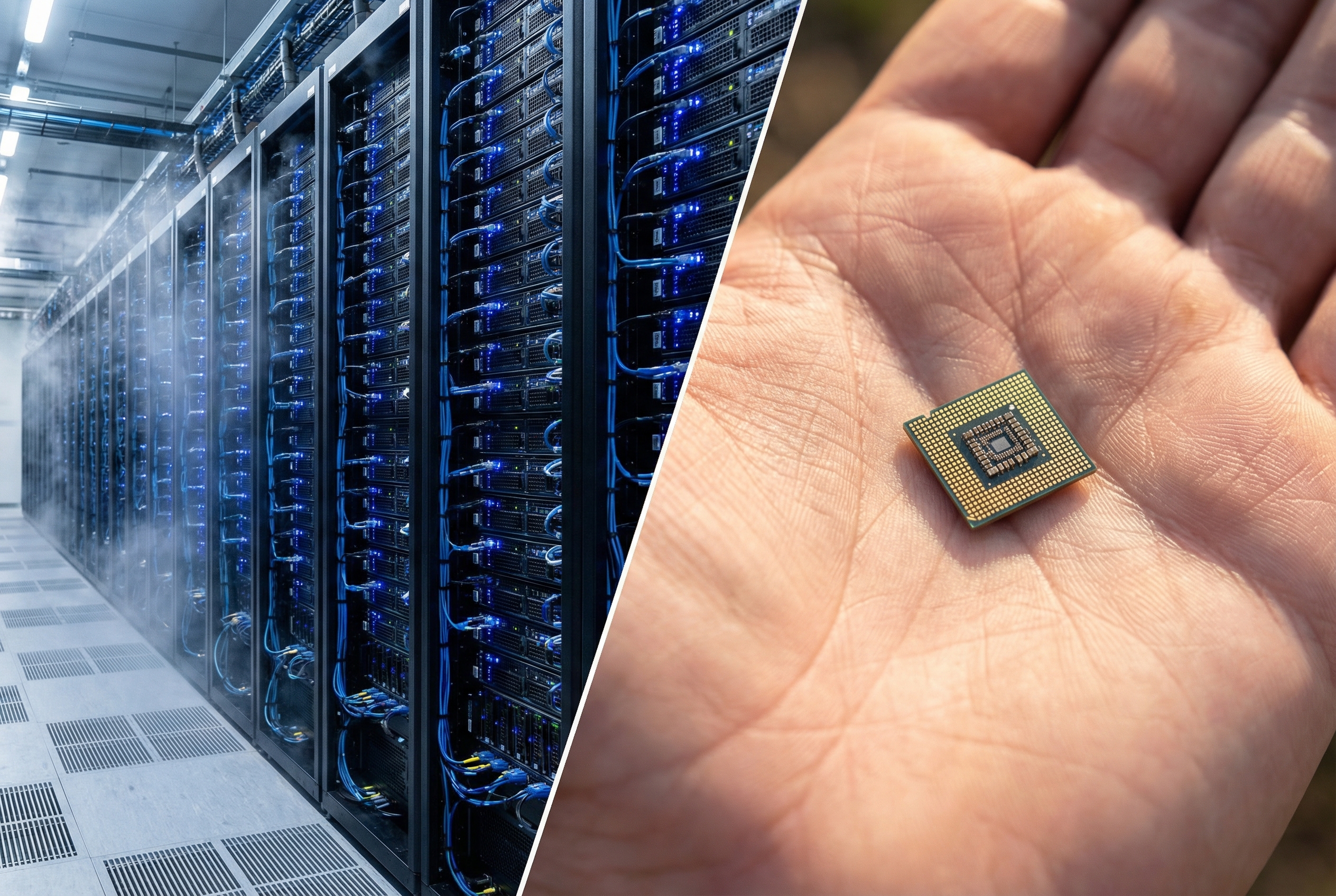

The biggest shift in enterprise AI this year isn't a new frontier model. It's the opposite: organizations are discovering that smaller, cheaper models running on their own hardware solve most of the problems they actually have.

Gartner predicts organizations will use small language models three times more than large language models by 2027. The SLM market is projected to grow from $7.7 billion to $20.7 billion by 2030. Those aren't speculative numbers from a startup pitch deck. That's the enterprise finally doing the math on what AI deployment actually costs at scale.

The Sovereign Edge

There's a term gaining traction in boardrooms: "Sovereign Edge." It describes a straightforward idea: companies want AI that runs on their own hardware, in their own data centers, under their own control.

This isn't paranoia. It's operational reality.

A hospital processing patient intake forms doesn't want that data traveling to a cloud API and back. A manufacturer running quality inspection on a production line can't afford 200 milliseconds of network latency when parts move at speed. A law firm classifying privileged documents has regulatory obligations that make cloud processing a compliance headache.

Small language models, meaning models in the 1 to 7 billion parameter range, make the sovereign edge deployable. The compact variants in families like Microsoft's Phi-4, Google's Gemma 3, Meta's Llama 3.2, and Mistral's small models fit this range (the full-size Phi-4 and Mistral Small releases are larger), and they run on hardware you can hold in your hand. A $50 edge device. A tablet. A phone. No data center required.

What You Actually Give Up (And What You Don't)

The honest version: SLMs are not frontier models. You're not going to run complex multi-step reasoning chains on a 3-billion-parameter model sitting on a Raspberry Pi. If you need GPT-4-class capability, you need GPT-4-class infrastructure.

But here's what most people miss when they think about enterprise AI workloads: the vast majority of them don't need frontier-level reasoning.

Classification. Extraction. Summarization. Translation. Form processing. Sentiment analysis. These tasks represent 70 to 80 percent of what organizations actually deploy AI for. And on narrow tasks like these, a well-fine-tuned small model can deliver accuracy that's hard to distinguish in practice from a model fifty times its size, at a fraction of the cost, with zero network dependency.

The trade-off isn't capability versus cost. It's theoretical maximum capability versus practical deployed capability. A frontier model that's too expensive to deploy at every point of need is less capable, in practice, than a small model that's running everywhere.

Three Advantages That Actually Matter

Privacy by architecture. When a model runs on the device, data never leaves the device. There's no API call to intercept, no cloud storage to breach, no third-party processor to audit. For healthcare, legal, financial services, and government, this isn't a nice-to-have. It's the difference between "we can deploy AI" and "legal says no."

Latency that enables new use cases. Cloud API round-trips take 200 to 800 milliseconds on a good day. Edge inference takes 10 to 50 milliseconds. That gap doesn't just make existing use cases faster; it makes new ones possible. Real-time quality inspection on a moving production line. Instant translation in a patient-facing kiosk. Document classification that happens as pages scan, not minutes later.

Cost curves that actually scale. An API call costs fractions of a cent. Multiply that by every employee, every transaction, every document, every day, and you're looking at six-figure monthly bills for large deployments. An edge device is a one-time hardware cost. After that, each inference costs little beyond power and maintenance. Finance teams understand this math immediately.
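To make the cost argument concrete, here is a minimal break-even sketch. Every figure in it (per-call price, daily call volume, device price, daily running cost) is an illustrative assumption rather than a number from a real deployment; plug in your own.

```python
import math

def monthly_api_cost(calls_per_day: float, price_per_call: float, days: int = 30) -> float:
    """Recurring cost of a per-call cloud API over one month."""
    return calls_per_day * price_per_call * days

def break_even_days(hardware_cost: float, calls_per_day: float,
                    price_per_call: float, edge_daily_opex: float = 0.0) -> float:
    """Days until a one-time hardware purchase is repaid by avoided API fees.

    edge_daily_opex is an assumed daily cost for power and maintenance.
    Returns math.inf when the device never pays for itself.
    """
    daily_saving = calls_per_day * price_per_call - edge_daily_opex
    if daily_saving <= 0:
        return math.inf
    return hardware_cost / daily_saving

# Illustrative assumptions: one $200 device, 2,000 calls a day, $0.002 per call.
device_cost = 200.0
calls = 2_000
price = 0.002

print(f"API cost per month: ${monthly_api_cost(calls, price):.2f}")
print(f"Break-even after about {break_even_days(device_cost, calls, price, 0.10):.0f} days")
```

Under these assumed numbers the device pays for itself in under two months. At low volume the picture flips: if daily API spend is smaller than the device's daily running cost, the function returns infinity and the API is the cheaper option.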

Where SLMs Are Already Working

The deployments happening right now aren't experimental. They're production systems handling real volume.

Healthcare: Patient intake on tablets. A fine-tuned SLM reads handwritten forms, extracts structured data, flags inconsistencies, and routes to the right department. Runs on a $200 tablet, processes a form in under two seconds, and the patient's data never touches the internet.

Manufacturing: Quality inspection cameras on factory floors. A small vision-language model identifies defects in real-time as products move down the line. The latency budget is tight, sometimes under 100 milliseconds, and these models hit it consistently because there's no network hop.

Retail: Inventory counting and shelf compliance. Store associates point a device at a shelf, and an SLM identifies products, counts stock, and flags misplacements. Works offline, which matters in warehouses and stockrooms where connectivity is unreliable.

Legal: Document classification at intake. Law firms process thousands of documents per case. An SLM running on local infrastructure classifies document types, identifies privileged material, and routes for review. The data sensitivity makes cloud processing a non-starter for most firms.

The Deployment Pattern That's Emerging

Smart organizations aren't choosing between small and large models. They're building tiered architectures.

The pattern looks like this: SLMs handle the high-volume, low-complexity work at the edge. When a task exceeds the small model's confidence threshold, it escalates to a larger model in the cloud. The result is that 85 to 90 percent of requests never leave the device, and the expensive frontier model only handles the genuinely hard cases.
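A rough sketch of that escalation logic follows. The two model functions are toy stand-ins invented for illustration, and the 0.85 threshold is an assumed value; in a real system you would swap in your own edge and cloud inference calls and tune the threshold on held-out data.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

@dataclass
class RoutedResult:
    label: str
    confidence: float  # confidence reported by the edge model
    tier: str          # "edge" or "cloud"

def route(text: str,
          edge_model: Callable[[str], Tuple[str, float]],
          cloud_model: Callable[[str], str],
          threshold: float = 0.85) -> RoutedResult:
    """Answer on the edge when confident, otherwise escalate to the cloud."""
    label, confidence = edge_model(text)
    if confidence >= threshold:
        return RoutedResult(label, confidence, "edge")
    # Below the threshold: only now does the request leave the device.
    return RoutedResult(cloud_model(text), confidence, "cloud")

# Toy stand-ins (hypothetical): a real edge model would return a label
# together with a confidence score for that label.
def toy_edge_model(text: str) -> Tuple[str, float]:
    if "invoice" in text.lower():
        return "invoice", 0.97
    return "unknown", 0.40

def toy_cloud_model(text: str) -> str:
    return "contract"

docs = ["Invoice for consulting services", "Amended and restated agreement"]
results = [route(d, toy_edge_model, toy_cloud_model) for d in docs]
edge_share = sum(r.tier == "edge" for r in results) / len(results)
print(results, edge_share)
```

One caveat on the design: a model's raw confidence score is not automatically well calibrated, so the threshold is worth validating against labeled examples before you trust it to decide what stays on the device.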

This isn't hypothetical architecture. It's how several Fortune 500 companies are structuring their AI infrastructure right now. The edge handles volume. The cloud handles complexity. Cost drops. Latency drops. Privacy improves. Everybody wins.
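The latency claim can be checked with back-of-the-envelope arithmetic using only the ranges quoted in this article (10 to 50 ms on the edge, 200 to 800 ms for a cloud round-trip, 85 to 90 percent of requests staying on the device). The sketch assumes every request runs on the edge first and that escalated requests pay the cloud round-trip on top of that.

```python
def expected_latency_ms(edge_share: float, edge_ms: float, cloud_ms: float) -> float:
    """Mean latency when all requests hit the edge and a fraction escalates.

    Assumption: escalated requests pay edge_ms plus cloud_ms.
    """
    escalated_share = 1.0 - edge_share
    return edge_ms + escalated_share * cloud_ms

# Best and worst cases built from the article's own ranges.
best = expected_latency_ms(edge_share=0.90, edge_ms=10, cloud_ms=200)
worst = expected_latency_ms(edge_share=0.85, edge_ms=50, cloud_ms=800)

print(f"Tiered mean latency: roughly {best:.0f} to {worst:.0f} ms")
print("Cloud-only latency:  200 to 800 ms")
```

Even in the worst case, the tiered mean (about 170 ms) sits below the best-case cloud round-trip. Note that this is an average: the escalated requests themselves are still as slow as any cloud call.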

What This Means If You're Planning AI Deployment

If your organization is evaluating AI deployment in 2026, here's the practical takeaway: start with the workload, not the model.

Map your actual AI use cases. For each one, ask: does this need frontier-level reasoning, or does it need reliable classification, extraction, or summarization? If it's the latter, and it usually is, a small model running on your own infrastructure is likely the better path.
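That triage question is simple enough to write down as a first-pass filter. The sketch below is one hypothetical way to do it: the task list comes from this article, while the `UseCase` fields, the tier names, and the example entries are assumptions made for illustration.

```python
from dataclasses import dataclass

# Task types this article identifies as not needing frontier-level reasoning.
SLM_FRIENDLY_TASKS = {
    "classification", "extraction", "summarization",
    "translation", "form processing", "sentiment analysis",
}

@dataclass
class UseCase:
    name: str
    task_type: str
    needs_multistep_reasoning: bool = False

def recommend_tier(use_case: UseCase) -> str:
    """First-pass recommendation; a starting point for review, not a verdict."""
    if use_case.needs_multistep_reasoning:
        return "frontier"
    if use_case.task_type in SLM_FRIENDLY_TASKS:
        return "slm-on-device"
    return "evaluate-both"

# Hypothetical inventory of use cases.
inventory = [
    UseCase("patient intake forms", "extraction"),
    UseCase("privileged document routing", "classification"),
    UseCase("multi-party negotiation analysis", "analysis", needs_multistep_reasoning=True),
    UseCase("demand forecasting", "forecasting"),
]

for uc in inventory:
    print(f"{uc.name}: {recommend_tier(uc)}")
```

Anything that lands in "evaluate-both" is a candidate for a small pilot that compares a fine-tuned small model against a larger one on your own data.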

The tooling has matured. Quantization methods like AWQ and quantized model formats like GGUF make it straightforward to compress models for edge hardware. Frameworks like llama.cpp and ONNX Runtime handle inference on everything from phones to industrial controllers. Fine-tuning pipelines are well-documented and reproducible.
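Why quantization matters for edge hardware comes down to simple arithmetic: weight memory is roughly parameter count times bits per weight. The sketch below estimates that figure for the model sizes discussed here. It is an approximation that covers weights only; real quantized files carry some extra metadata, and inference also needs memory for activations and the KV cache.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

for params in (1e9, 3e9, 7e9):
    fp16 = weight_memory_gb(params, 16)  # unquantized half precision
    q4 = weight_memory_gb(params, 4)     # 4-bit quantization
    print(f"{params / 1e9:.0f}B parameters: 16-bit ~ {fp16:.1f} GB, 4-bit ~ {q4:.1f} GB")
```

By this estimate a 7-billion-parameter model shrinks from about 14 GB at 16-bit to about 3.5 GB at 4-bit, which is the difference between needing a workstation GPU and fitting in the memory of a tablet-class device.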

The SLM wave isn't coming. It's here. The organizations deploying AI at scale in 2026 aren't the ones with the biggest cloud budgets. They're the ones that figured out most AI work doesn't need the cloud at all.

Marco Kotrotsos writes about practical AI implementation at gloss.run and acdigest.substack.com.

Audit your AI use cases and separate “frontier-required” reasoning from high-volume tasks like extraction, classification, and summarization—those are prime candidates for small, fine-tuned models. If you operate in regulated or latency-sensitive environments, start piloting on-device or on-prem deployments to reduce compliance friction and unlock real-time workflows. When budgeting, model total cost of ownership (including per-call API spend) against a one-time edge hardware rollout, and watch the fast-growing SLM ecosystem (Phi, Gemma, Llama, Mistral) for models that match your constraints.