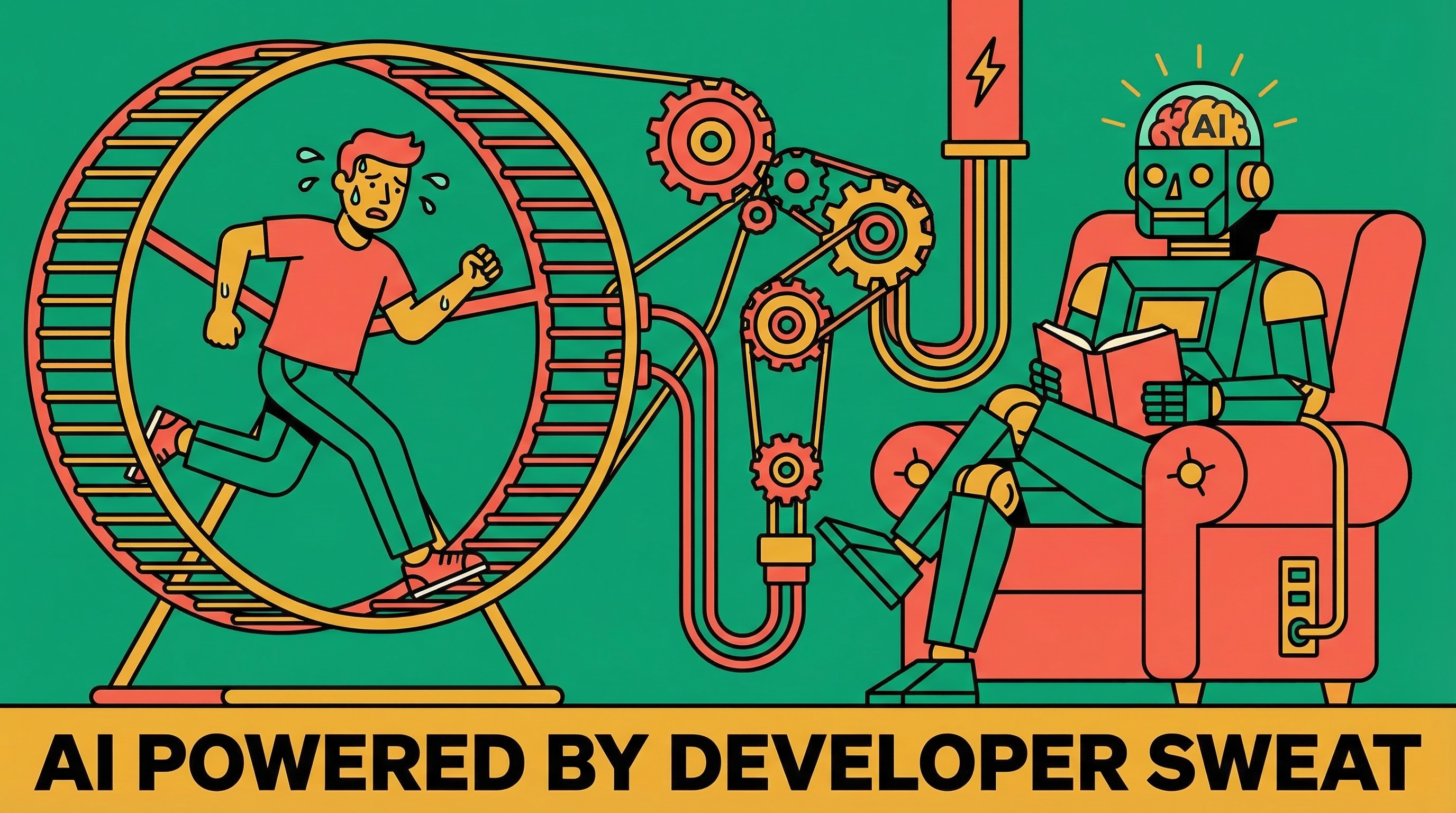

- AI coding assistants reduce friction on individual tasks, but organizations often convert the time saved into more work rather than relief from burnout.

- An embedded study found that AI intensified work through task expansion, blurred work-life boundaries, and accelerated multitasking.

- As AI makes it easy to take on adjacent responsibilities, capability without clear boundaries turns into unrecognized scope creep.

- Because AI interactions feel lightweight and always available, work leaks into breaks and personal time, reducing real recovery.

- Early AI power users are burning out first as higher output resets expectations upward, creating a self-reinforcing cycle of more work and less downtime.

A year ago I argued that AI coding assistants would reduce developer burnout. The tools would handle the mechanical parts (the boilerplate, the test scaffolding, the tedious file-by-file refactoring), and developers would spend more time on the work that actually matters. Less friction, less cognitive grind, less burnout.

I was half right. The tools did reduce friction on individual tasks. What I missed is that organizations treat every minute saved as a minute available for more work. The result is not less burnout. It is a different kind of burnout, and it is hitting the people who embraced AI the hardest.

The research caught up

Harvard Business Review published findings from an eight-month embedded study at a 200-person technology company. Researchers spent two days a week on-site, tracked internal communications, and interviewed over 40 people across engineering, product, design, and operations.

Their conclusion: AI does not reduce work. It intensifies it.

Three patterns emerged.

First, task expansion. Employees started doing work outside their traditional roles. Product managers wrote code. Researchers handled engineering tasks. People casually described it as "just trying things" with AI, but those experiments accumulated into significantly broader responsibilities that nobody formally assigned or acknowledged. When your tool can scaffold a feature in ten minutes, the temptation to take on adjacent work is real. You are not being irresponsible. You are being capable. But capability without boundaries becomes a trap.

One engineering lead in the study ended up informally supervising colleagues' AI-assisted work on top of their own tasks. Nobody asked them to. It just happened because they were the person who knew how the tools worked.

Second, blurred work-life boundaries. Because prompting an AI feels conversational rather than like "real work," it leaks into time that should be recovery. Lunch breaks, evenings, mornings before the workday starts. Asking Claude a question while waiting for coffee does not feel like working. But it is. The cognitive load accumulates even when the activity feels light.

The researchers found that downtime stopped providing actual recovery value. Employees were technically "off" but mentally still engaged with work problems through their AI tools. The always-available nature of the interface erased the friction that used to separate work time from personal time.

Third, accelerated multitasking. Workers managed multiple AI threads simultaneously, treating the model as a partner that enabled momentum on several fronts at once. In practice this meant constant attention-switching, frequent output-checking, and growing task backlogs. The feeling of productivity went up. The actual cognitive overhead went up faster.

The power users burn out first

The same week, TechCrunch reported something that lines up perfectly: the earliest, most enthusiastic AI adopters are showing burnout signs first. Not the skeptics. Not the people dragging their feet. The power users who built workflows and automated everything they could.

The mechanism is straightforward. You complete tasks faster with AI. Your manager notices the increased output. Expectations calibrate upward. More work gets assigned. You rely on AI more to keep up. Your scope broadens. The density of your work increases. You are now doing more, faster, with less recovery time, and the baseline expectation is that this pace is normal.

Nobody designed this cycle. It emerged because nobody designed against it.

Old burnout versus new burnout

The WHO defines burnout as a syndrome "resulting from chronic workplace stress that has not been successfully managed." The traditional developer version looked like this: holding complex systems in your head, debugging code you did not write, the constant context-switching between thinking and typing. AI tools genuinely reduce those specific pressures. I still believe that. Using Claude Code, I spend less time on the mechanical parts of coding and more time on the architectural decisions that actually matter.

But the new burnout is about volume, not difficulty. The cognitive load of any individual task went down, but the total load went up because the number of tasks increased, the scope of what you handle expanded, and the boundaries between "working" and "not working" dissolved.

The people most at risk are the ones doing everything "right." They learned the tools. They built efficient workflows. They became more productive. And then that productivity became the new baseline.

One engineer in the HBR study said it plainly: "You don't work less. You just work the same amount or even more."

This is not an argument against the tools. I use them every day. They are genuinely better for the work itself. But the tools exist inside organizations, and organizations have a reliable pattern of converting efficiency gains into output expectations rather than time savings.

This pattern is not new

Every productivity revolution does this. The washing machine did not give people more leisure time. It raised the standard for how clean clothes should be. The spreadsheet did not give accountants shorter weeks. It raised the expectation for how much analysis should be done.

AI coding assistants are following the same path. The efficiency gains get absorbed into higher output expectations rather than better working conditions, unless someone deliberately breaks the pattern.

What actually helps

At the organizational level, the HBR researchers proposed "AI Practice," a set of norms designed to prevent the intensification cycle. The ones that stood out: intentional pauses before green-lighting AI-accelerated work (does this task actually need to happen right now?), sequencing instead of constant responsiveness (batch notifications, protect focus windows), and deliberate human grounding time that interrupts the solo AI work loop.

At the individual level, the adjustments are more immediate.

Stop treating AI as always-on. Close the terminal when you are done for the day. The conversational interface makes it feel casual, but your brain does not distinguish between casual prompting and focused work. Both consume cognitive resources.

Track your actual scope over time. Are you doing the same job faster, or are you doing a bigger job at the same speed? If your responsibilities have quietly expanded since you started using AI tools, that is worth noticing and naming.

Set explicit boundaries for when you use AI and when you do not. Some tasks benefit from the slower, more deliberate thinking that happens without a tool offering instant answers. Not everything needs to be optimized.

Pay attention to the quality of your downtime. If your "breaks" involve scrolling through AI-generated outputs or iterating on a side project with Claude, that is not rest. That is a different flavor of work.

The tools are not the problem

The HBR researchers ended with a line worth keeping: "Without intention, AI makes it easier to do more, but harder to stop."

The tools are extraordinary. The pace they enable is real. The risks of not managing that pace are also real. These two facts coexist, and pretending one negates the other is how you end up as the most efficient person on the team who cannot think straight by Thursday afternoon.

AI did not create the organizational tendency to convert efficiency into output expectations. That pattern is decades old. But AI accelerated it in a way that is particularly hard to notice because the work feels lighter even as it compounds.

The people building with AI every day, and I count myself among them, need to be honest about this. The productivity gains are real. The burnout risk is also real. The absence of intention is the problem. Not the tools.

Treat AI speed as something to budget, not something to spend automatically. Set explicit boundaries on what you will and won't take on, and make scope changes visible so "extra" work doesn't become the new baseline. Protect recovery time by keeping AI out of breaks and evenings, and watch for multitasking creep. Running fewer concurrent threads with deliberate check-in points will usually feel slower, but it reduces cognitive load and burnout risk.