- AI adoption is widespread (88% of companies), but far fewer (39%) see meaningful bottom-line impact, revealing a large adoption-to-results gap.

- Many organizations fall into “AI theater,” adding AI tools on top of unchanged workflows, which produces activity without measurable ROI.

- The era of open-ended pilots is ending; by 2026 the competitive advantage shifts from experimentation to execution and operational integration.

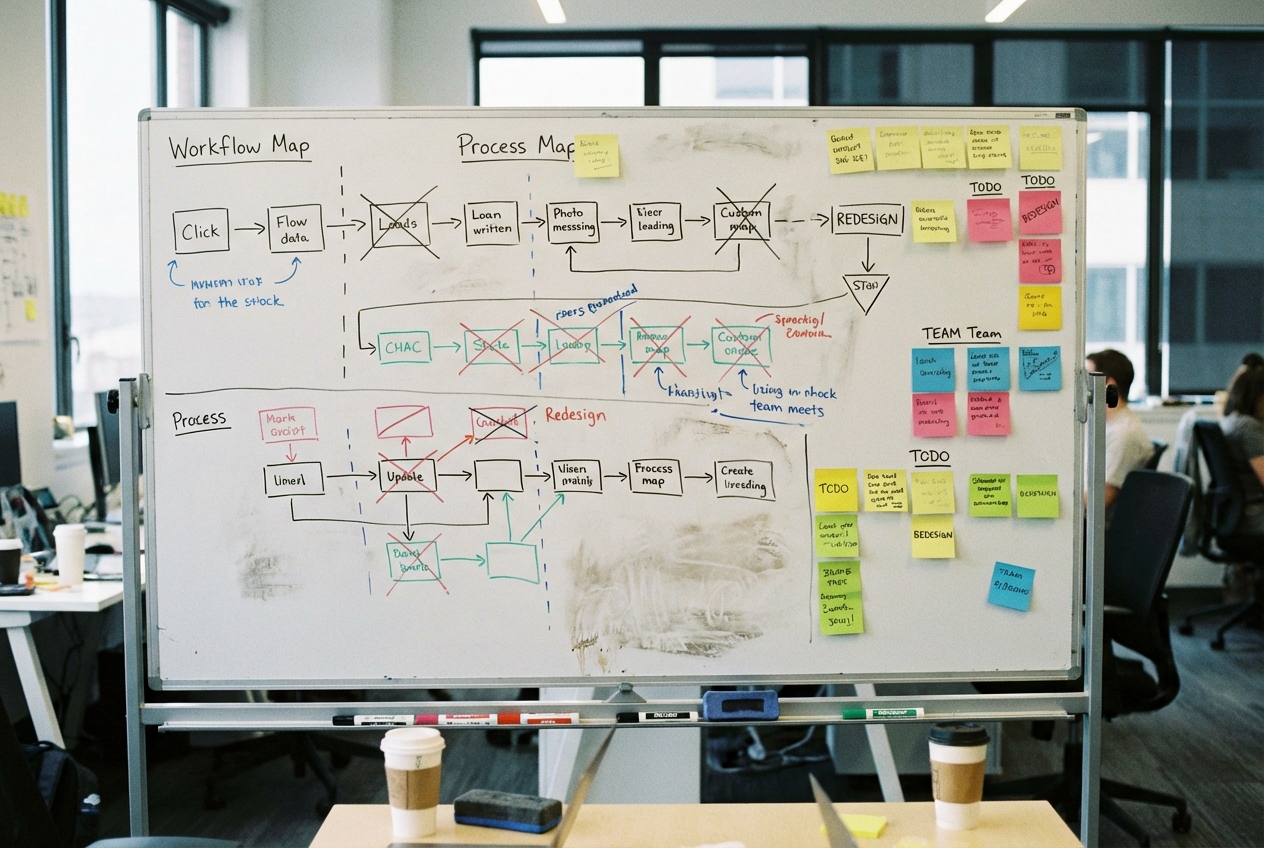

- Real value is created in the integration layer—redesigning end-to-end processes so AI handles triage, routing, pre-qualification, and drafting within the workflow.

- Companies that benefit treat AI as infrastructure for teams and workflow orchestration, not just a tool individual employees use at one step of a longer process.

88% of Companies Use AI. Only 39% Have Anything to Show for It.

The numbers tell a story nobody in enterprise AI wants to hear. According to recent surveys, 88% of companies report using AI in at least one business function. That sounds like a revolution. Then you look at the impact data: only 39% see a significant effect on their bottom line.

That's a 49-point gap between adoption and results. Almost half the market is running AI tools that aren't moving the needle.

AI theater

Most companies aren't failing at AI because they picked the wrong model or hired the wrong team. They're failing because they bought tools and layered them on top of existing workflows without changing anything.

A marketing team gets access to a writing assistant but still runs the same approval chain with the same headcount and the same turnaround time. A finance department plugs in anomaly detection but still relies on a monthly reporting cadence. The tool is new. Everything around it is the same.

The pattern is consistent. A company announces an "AI initiative." They procure licenses. Individual employees start experimenting. Some find it useful, most don't change their habits. Six months later, leadership asks where the ROI is. Nobody has a clear answer. The tools are there. The integration isn't.

Experimentation is over

For the past three years, experimenting was the right move. Nobody knew which capabilities would matter, which vendors would survive, or how to measure value. Trying things made sense.

That window closed. Both MIT Sloan and IBM identify 2026 as the year AI shifts from experimentation to execution. The technology has matured enough that the bottleneck isn't "can it do this?" anymore. It's "have we wired it into the way we actually work?"

Three years of pilots without operational integration is just expensive tourism. You visited AI. You didn't move there.

Where value gets created

The gap between adoption and impact has a specific location: the integration layer. The boring, unglamorous work of connecting AI capabilities to actual business processes. Not the model. Not the prompt. The plumbing.

This means restructuring a customer service workflow so AI handles triage and routing before a human ever sees the ticket. Rebuilding procurement so AI pre-qualifies vendors against compliance criteria automatically. Redesigning content production so AI generates first drafts, humans add expertise, and the review cycle drops from two weeks to three days.
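Here is what "triage and routing before a human ever sees the ticket" can look like, as a minimal Python sketch. Everything in it is hypothetical: the `Ticket` type, the queue names, and the keyword rules are invented for illustration. `classify` is a keyword stand-in for whatever model a real deployment would call. The point is where the AI step sits: every ticket is labeled, prioritized, and queued before anyone opens it.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    ticket_id: int
    text: str


# Hypothetical routing rules: queue name -> trigger keywords.
ROUTES: dict[str, tuple[str, ...]] = {
    "billing": ("invoice", "refund", "charge"),
    "technical": ("error", "crash", "login"),
}
# Hypothetical words that mark a ticket as urgent during triage.
URGENT_WORDS = ("outage", "urgent")


def classify(ticket: Ticket) -> tuple[str, bool]:
    """Stand-in for an AI classifier: returns (queue, is_urgent).

    A real system would call a model here; keyword matching keeps
    the sketch self-contained and runnable.
    """
    text = ticket.text.lower()
    urgent = any(word in text for word in URGENT_WORDS)
    for queue, keywords in ROUTES.items():
        if any(k in text for k in keywords):
            return queue, urgent
    return "general", urgent


def triage_and_route(tickets: list[Ticket]) -> dict[str, list[Ticket]]:
    """Assign every ticket to a queue, urgent ones first, before a human sees it."""
    queues: dict[str, list[tuple[bool, Ticket]]] = {}
    for ticket in tickets:
        queue, urgent = classify(ticket)
        queues.setdefault(queue, []).append((urgent, ticket))
    # sorted() is stable, so tickets of equal urgency keep their arrival order.
    return {
        queue: [t for _, t in sorted(items, key=lambda pair: not pair[0])]
        for queue, items in queues.items()
    }


tickets = [
    Ticket(1, "Question about my invoice"),
    Ticket(2, "Login error, site outage for our team"),
    Ticket(3, "Refund please, urgent"),
    Ticket(4, "How do I export a report?"),
]
routed = triage_and_route(tickets)
print({q: [t.ticket_id for t in ts] for q, ts in routed.items()})
# {'billing': [3, 1], 'technical': [2], 'general': [4]}
```

Swapping the keyword rules for a model changes only `classify`; the workflow around it, where humans pick up pre-sorted queues instead of a raw inbox, is the part that has to be redesigned.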

None of this makes for a good keynote. All of it is where the 39% that see real impact are spending their time.

The pattern among companies that get results: they don't treat AI as a tool that individuals use. They treat it as infrastructure that teams operate on. The shift from individual AI use to team and workflow orchestration is the single biggest differentiator between companies that adopted AI and companies that benefited from it.

The workflow problem

Most knowledge work follows a chain. Someone receives a request. They gather information from multiple systems. They apply judgment. They create output. They send it for approval. They revise. They deliver.

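Where do companies typically insert AI? At the "create output" step. One step in a seven-step chain. Even if AI makes that step 10x faster, the overall process might improve by only 15%, because everything around it stays slow: if that step takes about a sixth of the total time, a 10x speedup removes 90% of that sixth, or roughly 15% of the whole.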
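The 15% figure is simple arithmetic: speeding up one step shrinks only that step's share of the total, the same logic as Amdahl's law. The Python sketch below assumes hypothetical step durations for the seven-step chain, chosen so that "create output" is one-sixth of the total. They are illustrative, not measured. It compares a 10x speedup on that single step with a redesign that also speeds up information gathering and approval (again, assumed factors).

```python
# Hypothetical durations in hours for the seven-step knowledge-work chain.
# These are illustrative assumptions, not measurements.
STEPS: dict[str, float] = {
    "receive request": 1.0,
    "gather information": 6.0,
    "apply judgment": 3.0,
    "create output": 4.0,  # 4 of 24 hours: one-sixth of the total
    "send for approval": 6.0,
    "revise": 2.0,
    "deliver": 2.0,
}


def total_time(durations: dict[str, float], speedups: dict[str, float]) -> float:
    """End-to-end time after dividing each listed step by its speedup factor."""
    return sum(hours / speedups.get(step, 1.0) for step, hours in durations.items())


def improvement(durations: dict[str, float], speedups: dict[str, float]) -> float:
    """Fraction of end-to-end time removed by the given speedups."""
    return 1 - total_time(durations, speedups) / total_time(durations, {})


# AI inserted at one step only.
one_step = improvement(STEPS, {"create output": 10})
# Hypothetical redesign touching three steps.
redesign = improvement(
    STEPS, {"gather information": 10, "create output": 10, "send for approval": 3}
)
print(f"one step: {one_step:.0%}, redesign: {redesign:.0%}")
# one step: 15%, redesign: 54%
```

Under these assumptions, even an infinitely fast "create output" step caps the gain at one-sixth, about 17%. Bigger gains only come from changing the other steps.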

Companies closing the adoption-impact gap redesign the entire chain. They ask which steps can be eliminated, which can be automated, which need human judgment that AI can augment. This is process work, not technology work. Most organizations are bad at it because they've spent two decades bolting new tools onto old processes and calling it transformation.

The hiring paradox

One of the more counterintuitive findings comes from EY-Parthenon: companies using AI most intensively grow their headcount faster than companies using it lightly or not at all.

What's happening is that companies finding real value use the productivity gains to do more, not to do the same with less. They enter new markets, launch new products, handle more customers. The integration work creates capacity, and ambitious companies fill that capacity with growth.

This reframes the investment case. The question isn't "how many people can we replace?" It's "what can we do now that we couldn't before?" Companies stuck in AI theater are still asking the first question. Companies seeing results moved past it a while ago.

What integration looks like, concretely

A mid-size insurance company processes claims. Before AI, an adjuster receives a claim, manually pulls policy details, reviews documentation, cross-references fraud patterns, makes a determination, writes it up. Average time: 4 hours per claim.

AI theater version: give the adjuster a chatbot that helps write the determination letter faster. Saves maybe 20 minutes. Nice, not transformative.

Integration version: AI ingests the claim at submission, pulls policy details automatically, flags documentation gaps back to the claimant in real time, runs fraud pattern analysis before the adjuster sees it, and presents a pre-scored case file with a draft determination. The adjuster's job shifts from processing to reviewing and deciding. Time drops to 45 minutes, and accuracy improves because the adjuster focuses on judgment instead of data gathering.

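Same AI. Radically different implementation. The chatbot trims about 8% off a four-hour claim (20 of 240 minutes); the redesign cuts it by roughly 80% (from 240 minutes to 45). The difference comes entirely from integration work that required redesigning the claims workflow, not just buying a tool.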
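The intake stage of the integration version can be sketched in Python, with hypothetical names throughout. `lookup_policy` stands in for a connector to the policy system. `score_fraud` is a one-rule placeholder for a real fraud-pattern model. The required-document list and the threshold are invented for illustration. What the sketch shows is the shape of the redesign: policy lookup, gap detection, and fraud scoring all run at submission, and the adjuster receives a pre-scored `CaseFile` with a draft determination to review.

```python
from dataclasses import dataclass

# Hypothetical documents every claim must include.
REQUIRED_DOCUMENTS = {"claim_form", "photos", "repair_estimate"}
# Hypothetical score at or above which the draft refers the claim for investigation.
FRAUD_THRESHOLD = 0.7


@dataclass
class Claim:
    claim_id: str
    policy_number: str
    amount: float
    documents: set[str]


@dataclass
class CaseFile:
    """What the adjuster receives: assembled, checked, and pre-scored."""
    claim: Claim
    policy: dict
    missing_documents: list[str]
    fraud_score: float
    draft_determination: str


def lookup_policy(policy_number: str) -> dict:
    """Stub for a connector into the policy system (hypothetical)."""
    return {"policy_number": policy_number, "active": True, "coverage_limit": 50_000}


def score_fraud(claim: Claim, policy: dict) -> float:
    """Placeholder for a fraud-pattern model: a single toy rule."""
    return 0.9 if claim.amount > 0.8 * policy["coverage_limit"] else 0.1


def ingest(claim: Claim) -> CaseFile:
    """Run the automated steps at submission, before an adjuster is involved."""
    policy = lookup_policy(claim.policy_number)
    # In a live system, these gaps would go straight back to the claimant.
    missing = sorted(REQUIRED_DOCUMENTS - claim.documents)
    score = score_fraud(claim, policy)
    if not policy["active"]:
        draft = "Recommend denial: policy inactive."
    elif missing:
        draft = "Pending: awaiting " + ", ".join(missing) + "."
    elif score >= FRAUD_THRESHOLD:
        draft = "Refer for investigation: fraud score above threshold."
    else:
        draft = f"Recommend approval of {claim.amount:,.2f}."
    return CaseFile(claim, policy, missing, score, draft)


case = ingest(Claim("C-1", "P-42", 3_200.0, {"claim_form", "photos"}))
print(case.missing_documents)    # ['repair_estimate']
print(case.draft_determination)  # Pending: awaiting repair_estimate.
```

The final decision deliberately stays outside `ingest`. The code gathers, checks, and scores; the adjuster's job shifts to judgment, which is exactly the split the example describes.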

The boring work wins

If your company is in the 88% that adopted AI but not the 39% seeing results, the diagnosis is almost certainly not about technology. You probably have capable tools. You might have talented people using them.

The gap is in the middle. Between the tool and the outcome there's a workflow that hasn't been redesigned. An approval chain that predates the tool by a decade. Data sitting in a system the AI can't access because nobody built the connector.

This isn't work that gets announced at all-hands meetings. It happens in process mapping sessions and integration sprints and uncomfortable conversations about changing how teams operate. It requires organizational will more than technical skill.

The 49-point gap will define the competitive landscape for the next several years. Some companies will close it through disciplined integration work. Others will keep adding AI tools to unchanged workflows and wondering why the results don't match the demo.

The technology was never the hard part.

Marco Kotrotsos writes about practical AI implementation at gloss.run and acdigest.substack.com.

If you want AI to pay off, stop measuring success by licenses, pilots, or isolated productivity gains and start redesigning the full workflow around where time actually gets stuck. Map the end-to-end process, then decide which steps to eliminate, automate, or augment with human judgment—and wire AI into the handoffs, routing, and approvals, not just the “create output” step. Look for ROI in cycle-time reduction, fewer escalations, and higher throughput per team, and treat integration work as the main project rather than an afterthought.