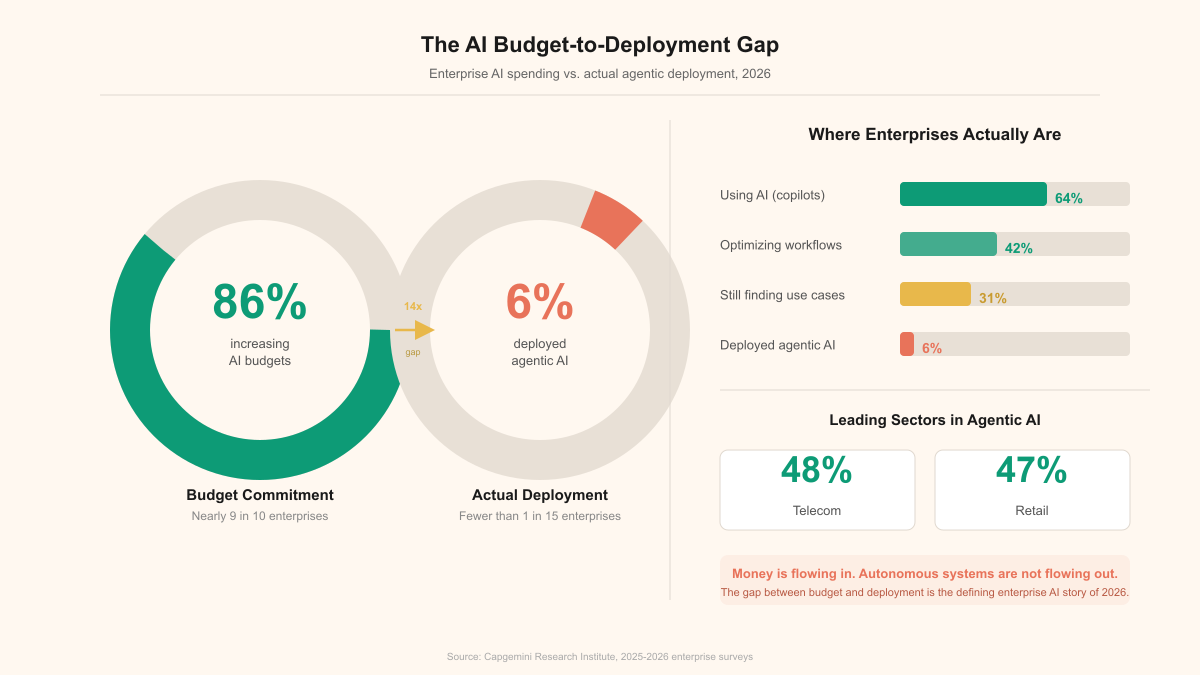

- Only 6% of organizations have fully implemented agentic AI even as 86% plan to increase AI budgets in 2026, signaling a major execution gap.

- The widely cited 64% “we’re using AI” figure is largely driven by copilots (assistive features) rather than autonomous agents that execute end-to-end workflows.

- Most AI spend is going toward optimizing existing AI workflows (42%) and searching for additional use cases (31%), not building and operationalizing agentic systems.

- Telecom (48%) and retail/CPG (47%) lead agentic adoption because high transaction volumes and standardized processes create clear ROI and fast feedback loops.

- Regulated, high-stakes sectors like financial services and healthcare lag despite volume because compliance, liability, and process variability make autonomy harder to deploy safely.

There's a number making the rounds in enterprise AI circles that should stop every executive mid-sentence. According to IDC, only 6% of organizations have fully implemented agentic AI. Six percent. And yet 86% of respondents say their AI budgets are going up in 2026. The math on that is brutal: the vast majority of companies pouring money into AI are buying capability they can't operationalize. They're funding demos, sponsoring pilots, and staffing up innovation labs that produce slide decks instead of production systems.

The gap between AI spending and AI deployment isn't new. But the sheer scale of it, in a market that's been talking about agentic AI for over a year, is worth paying attention to.

The 64% illusion

Ask enterprises whether they're using AI, and 64% will say yes. Actively using it. Tools deployed, licenses paid, internal comms sent. On paper, the AI transformation is well underway.

Dig one layer deeper and the picture falls apart. That 64% is overwhelmingly copilot usage. Autocomplete in the IDE. Summarization in the email client. A chatbot on the intranet that mostly answers HR questions. Useful tools, sure. But they're not agentic AI. They don't take action, don't make decisions across workflows, don't operate with any real autonomy.

IDC predicts that AI copilots will be embedded in 80% of enterprise workplace applications. Plausible. Copilots are easy. They sit inside tools people already use. They suggest, they summarize, they assist. They don't break things, because they don't actually do things. The user stays in the loop for every action.

Agentic AI is a different animal. An agent receives a goal, breaks it into tasks, executes those tasks across systems, handles exceptions, and delivers a result. The distance between a copilot and an agent is roughly the distance between a spellchecker and a junior employee. And 94% of organizations haven't crossed it.
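To make the copilot/agent distinction concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `plan` function is a hard-coded stand-in for whatever planning step a real agent would use, and the three task functions simulate calls into real systems. It only illustrates the shape of the loop described above (goal, tasks, execution, exception handling, result), not how any particular product works.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    name: str
    action: Callable[[], str]  # stands in for a call into a real system


@dataclass
class AgentResult:
    completed: list[str] = field(default_factory=list)
    escalated: list[str] = field(default_factory=list)


def copilot_suggest(draft: str) -> str:
    """Copilot: returns a suggestion and stops. The user takes every action."""
    return draft.strip().capitalize()


def lookup_order() -> str:
    return "order found"


def issue_refund() -> str:
    # Simulated failure so the escalation path is exercised.
    raise RuntimeError("payment system timeout")


def notify_customer() -> str:
    return "email sent"


def plan(goal: str) -> list[Task]:
    """Hypothetical planner: a real agent would derive tasks from the goal."""
    return [
        Task("lookup_order", lookup_order),
        Task("issue_refund", issue_refund),
        Task("notify_customer", notify_customer),
    ]


def run_agent(goal: str) -> AgentResult:
    """Agent: plans, executes tasks in order, and escalates on failure."""
    result = AgentResult()
    tasks = plan(goal)
    for i, task in enumerate(tasks):
        try:
            task.action()
            result.completed.append(task.name)
        except Exception:
            # Hand the failed task and everything after it to a human.
            result.escalated.extend(t.name for t in tasks[i:])
            break
    return result


result = run_agent("refund order 1001")
print(result.completed)
print(result.escalated)
```

The copilot function has no side effects at all, which is why it is easy to deploy. The agent loop is where the operational questions start: what counts as a failure, who receives the escalation, and what happens to the tasks that never ran.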

Where the money is actually going

The spending priorities tell you exactly where companies are stuck.

| Spending priority | % of respondents |
|---|---|
| Optimizing existing AI workflows | 42% |
| Finding additional use cases | 31% |
| Infrastructure and platform investment | ~15% |
| Workforce training and readiness | ~12% |

42% are optimizing what they already have. 31% are still looking for places to apply AI. That's 73% of respondents either polishing existing copilot setups or hunting for new ones. Not deploying agents. Not building autonomous workflows. Just optimizing and exploring.

I've seen this pattern before. Every enterprise tech cycle runs the same playbook: spend on tools first, figure out what to do with them second. The cloud migration era looked exactly like this. Companies bought AWS contracts years before they figured out how to run workloads on them efficiently. AI is following the same trajectory, just faster and more expensive.

The sectors that actually moved

Not every industry is stuck at 6%. Two sectors have pulled noticeably ahead, and the reasons tell you a lot about what makes agentic AI actually work in practice.

| Industry | Agentic AI adoption |
|---|---|
| Telecom | 48% |
| Retail / CPG | 47% |
| Financial services | ~25% |
| Healthcare | ~18% |
| Manufacturing | ~15% |
| Average across all sectors | 6% (fully deployed) |

Telecom and retail lead because they've got two things most other industries lack: massive transaction volumes that make the ROI for automation obvious, and relatively standardized processes that agents can follow without constant human judgment. A telecom company routing customer service inquiries through an agentic system can measure cost savings per ticket within weeks. A retailer using agents for inventory optimization sees results in the next quarterly report. Clean feedback loops.

Healthcare and financial services have the transaction volume but not the standardization. Regulatory complexity, liability concerns, and the sheer stakes of getting it wrong create friction that slows deployment no matter how good the technology is. The tech isn't the bottleneck. The context is.

The four walls keeping the 94% stuck

These show up in every survey, every analyst report, and every honest conversation with enterprise AI teams. They're stubbornly consistent.

Data quality and integration. Agents need to operate across systems, which means they need clean, accessible, well-structured data from multiple sources. And most enterprises? They've got data spread across dozens of systems with inconsistent formats, incomplete records, and no unified access layer. You can't build an agent that processes insurance claims if the claims data lives in three different systems with three different schemas and no reliable way to reconcile them.
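As a rough illustration of the three-schemas problem, the sketch below maps records from three made-up systems into one shared shape and flags claims whose amounts disagree. The system names, field names, and sample records are all assumptions invented for the example. Real reconciliation is far messier, but the basic pattern of one adapter per source plus an explicit conflict list is a reasonable starting point.

```python
from typing import Callable

Record = dict
Adapter = Callable[[Record], Record]


# One adapter per (hypothetical) source system, each emitting the same shape.
def from_billing(r: Record) -> Record:
    return {"claim_id": r["ClaimNo"], "amount_cents": round(float(r["Amt"]) * 100)}


def from_crm(r: Record) -> Record:
    return {"claim_id": r["claim_ref"].upper(), "amount_cents": r["amount_cents"]}


def from_legacy(r: Record) -> Record:
    amount = float(r["TOTAL"].replace(",", ""))
    return {"claim_id": r["ID"].strip(), "amount_cents": round(amount * 100)}


def reconcile(sources: list[tuple[Adapter, list[Record]]]):
    """Merge normalized claims; collect IDs whose amounts disagree."""
    merged: dict[str, Record] = {}
    conflicts: list[str] = []
    for adapter, records in sources:
        for raw in records:
            claim = adapter(raw)
            # Keep the first version seen; later versions are only compared.
            seen = merged.setdefault(claim["claim_id"], claim)
            if seen["amount_cents"] != claim["amount_cents"]:
                conflicts.append(claim["claim_id"])
    return merged, conflicts


# Made-up sample data: the same claims, described three different ways.
billing = [{"ClaimNo": "C-100", "Amt": "1250.00"}]
crm = [
    {"claim_ref": "c-100", "amount_cents": 125000},
    {"claim_ref": "c-101", "amount_cents": 40000},
]
legacy = [{"ID": " C-101 ", "TOTAL": "450.00"}]

merged, conflicts = reconcile(
    [(from_billing, billing), (from_crm, crm), (from_legacy, legacy)]
)
print(sorted(merged))
print(conflicts)
```

The conflict list is the part that matters for an agent. A claim that appears in it should go to a person, not into an automated payout.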

Governance and compliance. When a copilot suggests a bad email subject line, nothing happens. When an agent executes a bad trade, processes an incorrect refund, or sends protected health information to the wrong recipient, you've got legal and regulatory consequences. 83% of AI leaders express major or extreme concern about generative AI risks. That's not irrational. It reflects the reality that most organizations don't have the governance frameworks to let agents operate safely.

Infrastructure gaps. Agentic AI requires orchestration layers, monitoring systems, fallback mechanisms, and integration infrastructure that most enterprises just don't have. A copilot runs inside an existing application. An agent needs its own operational environment, with logging, guardrails, human escalation paths, and audit trails. Completely different operational surface area.
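Here is a small sketch of what that extra surface area looks like for a single action, again in Python and again under stated assumptions: the refund limit, the event names, and the in-memory `audit_log` list are all hypothetical. A production system would write to durable storage and route escalations to an actual queue. The point is only that every agent action passes through a guardrail, leaves an audit entry, and has a path to a human.

```python
import json
from datetime import datetime, timezone

REFUND_LIMIT_CENTS = 50_000  # hypothetical policy threshold

audit_log: list[str] = []  # stand-in for durable, append-only storage


def record(event: str, **details) -> None:
    """Append a timestamped JSON entry to the audit trail."""
    entry = {"at": datetime.now(timezone.utc).isoformat(), "event": event, **details}
    audit_log.append(json.dumps(entry))


def process_refund(order_id: str, amount_cents: int) -> str:
    """Run one refund through guardrails; return what happened to it."""
    record("refund_requested", order_id=order_id, amount_cents=amount_cents)

    # Guardrail 1: reject obviously invalid input outright.
    if amount_cents <= 0:
        record("refund_rejected", order_id=order_id, reason="non-positive amount")
        return "rejected"

    # Guardrail 2: anything over the limit goes to a human, not the agent.
    if amount_cents > REFUND_LIMIT_CENTS:
        record("refund_escalated", order_id=order_id, reason="over limit")
        return "escalated"

    # Within policy: the agent acts on its own (the payment call is omitted).
    record("refund_executed", order_id=order_id)
    return "executed"


outcomes = [
    process_refund("A1", 2_000),
    process_refund("A2", 90_000),
    process_refund("A3", 0),
]
print(outcomes)
print(len(audit_log))
```

None of this code is about the model. That is the difference in operational surface area: a copilot needs none of it, and an agent is not safe to run without it.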

Workforce readiness. Someone has to design, deploy, monitor, and improve these agents. And that someone needs to understand both the AI capabilities and the business process well enough to build agents that actually work. Most organizations don't have this talent. They've got AI enthusiasts who understand the technology and business analysts who understand the processes, but not people who bridge both worlds. (I see this constantly in my own work with companies trying to make this leap.)

The pilot trap

The most dangerous place in the enterprise AI journey right now is the successful pilot.

A team spins up an agent in a sandbox, feeds it clean data, gives it a well-scoped task, and watches it perform beautifully. Leadership sees the results, increases the budget, and asks to scale it across the organization.

Then scaling fails. Production data is messier than pilot data. Edge cases are more varied. Integration points are more fragile. The team that babied the pilot agent through its daily work can't provide that same attention to twenty agents across five departments. The pilot success becomes a deployment failure, and the 6% stays at 6%.

This isn't a technology problem. The models are capable: GPT-4, Claude, and Gemini can reason, plan, and execute multi-step workflows. The problem is organizational. Companies are buying the ingredients without having the kitchen, the recipe, or the chef.

The honest path forward

There's a version of the next twelve months where the 6% moves to 12% or 15%. It won't be because of better models or bigger budgets. It'll be because a small number of companies do the unglamorous work that the other 94% are skipping.

They'll pick one workflow, not ten. They'll fix the data pipeline for that one workflow until it's clean and reliable. They'll build governance frameworks specific to what that agent does, not abstract AI policies that cover everything and govern nothing. They'll hire or train people who understand both the AI and the business process. And they'll measure success not by whether the agent works in a demo, but by whether it works on the worst data, on the busiest day, with the least experienced person monitoring it.

The 86% increasing their budgets aren't wrong to spend. AI is a real capability shift. But there's a meaningful difference between spending on AI and deploying AI. Right now, the enterprise market is very good at the first and very bad at the second. The 6% who figured out deployment aren't smarter or better funded. They just stopped treating pilots as progress and started treating production as the only metric that counts.

Treat “AI adoption” claims in your organization as a maturity question: distinguish copilot usage from agents that can take actions across systems with measurable outcomes. If you’re investing more, prioritize a small number of high-volume, standardized workflows where ROI and error detection are clear, then build the operational foundations (governance, integration, monitoring, and human-in-the-loop controls) needed to scale. Watch your budget mix: if most dollars are going to pilots and “use-case hunting,” you’re likely funding demos rather than production-grade deployment.