- Early LLM frameworks like LangChain filled real gaps in 2023, but modern model APIs now provide native tool use, structured output, and conversation management.

- Framework abstractions often add complexity without adding capability, turning simple agent logic into hard-to-debug plumbing.

- Many effective production agents are built as a simple loop that alternates between LLM calls and tool execution, not as graphs or workflow engines.

- Using a framework creates a “framework tax”: extra dependencies, lag behind new API features, and ongoing maintenance from breaking changes and wrapper limitations.

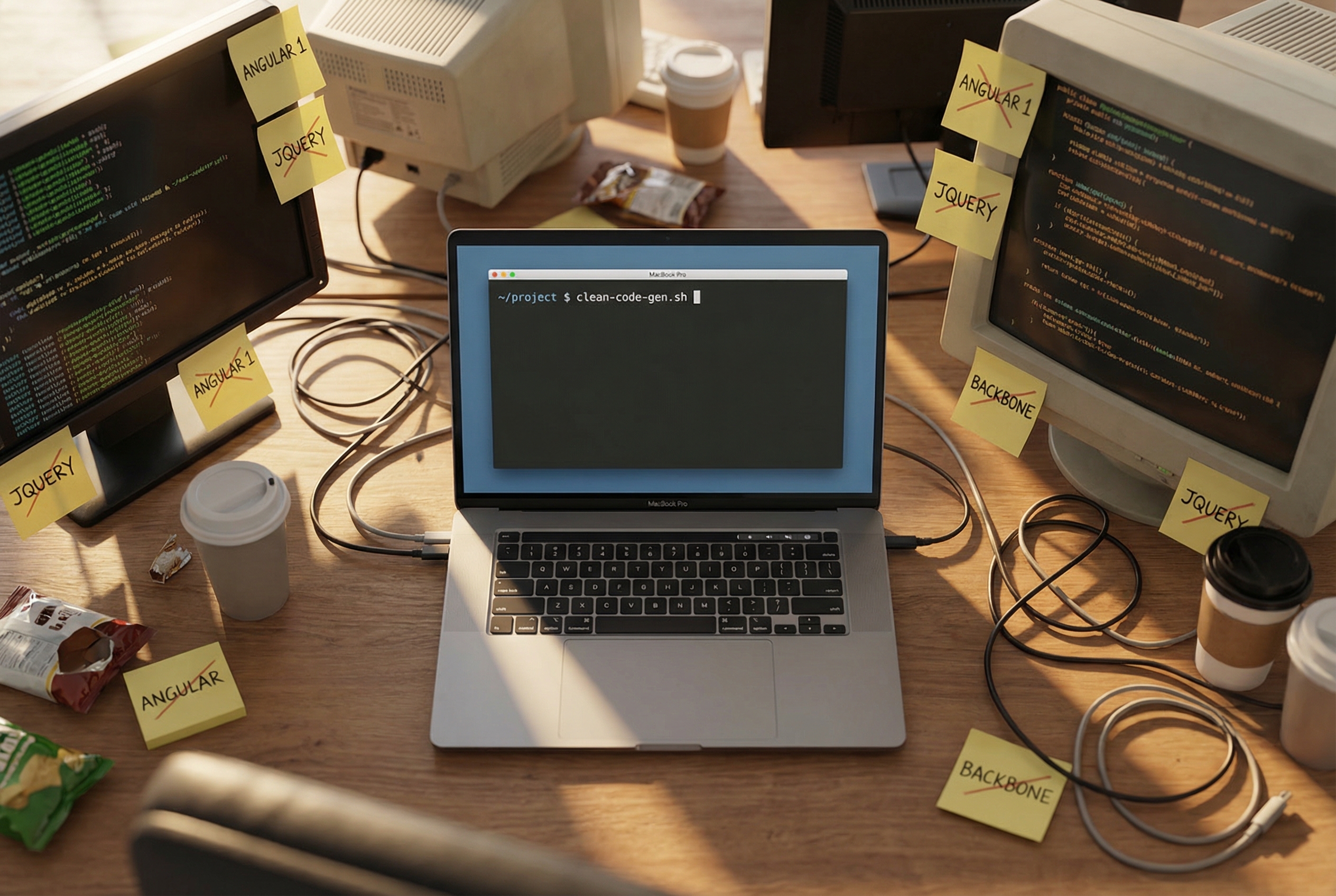

I spent a Saturday afternoon last month ripping LangChain out of a client's production agent. The agent was supposed to summarize support tickets and route them. Simple job. But somewhere between the ConversationChain, the OutputParser, the RetrieverQA chain, and a custom CallbackHandler, a straightforward API call had turned into 400 lines of framework plumbing. When it broke, the stack trace went through files nobody on the team had ever opened.

Replacing it with direct Claude API calls took about three hours. The new version was 60 lines of Python. It was faster, cheaper on tokens, and when something went wrong, the error pointed to code the team actually wrote.

This is happening everywhere right now. The frameworks, wrappers, and abstractions people built twelve months ago are already getting in the way. The models got good enough that the middleware became the bottleneck.

The abstraction that stopped abstracting

Frameworks exist to solve problems. LangChain solved a real one: in early 2023, model APIs were inconsistent, tool calling wasn't native, and you genuinely needed middleware to glue things together. If you wanted structured output from GPT-3.5, you had to parse it yourself. If you wanted an agent that could use tools, you needed orchestration code that the APIs didn't provide.

That was eighteen months ago. Today, Claude supports native tool use, structured JSON output, and multi-turn conversation management out of the box. OpenAI's API does the same. Google's does too. The problems LangChain was built to solve have been absorbed into the APIs themselves.
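For example, with Claude's native tool use you can describe the output you want as a JSON Schema and force the model to "call" that tool, so the reply arrives already structured instead of as free text you parse yourself. A minimal sketch, assuming the Anthropic Messages API field names (`name`, `description`, `input_schema`, `tool_choice`) and response blocks converted to plain dicts; the `route_ticket` tool and its queues are hypothetical.

```python
# Hypothetical tool whose input_schema doubles as the output format we want.
ROUTE_TICKET_TOOL = {
    "name": "route_ticket",
    "description": "Record a one-line summary and the destination queue for a support ticket.",
    "input_schema": {
        "type": "object",
        "properties": {
            "summary": {"type": "string"},
            "queue": {"type": "string", "enum": ["billing", "technical", "account"]},
        },
        "required": ["summary", "queue"],
    },
}

# Extra arguments for client.messages.create(): forcing this tool means the
# reply arrives as a tool_use block whose "input" should match the schema.
STRUCTURED_OUTPUT_KWARGS = {
    "tools": [ROUTE_TICKET_TOOL],
    "tool_choice": {"type": "tool", "name": "route_ticket"},
}


def extract_routing(content_blocks: list) -> dict:
    """Return the arguments of the route_ticket call, already parsed into a dict."""
    for block in content_blocks:
        if block.get("type") == "tool_use" and block.get("name") == "route_ticket":
            return block["input"]  # no regex or manual JSON parsing needed
    raise ValueError("response contained no route_ticket tool call")
```

You would pass `STRUCTURED_OUTPUT_KWARGS` alongside `model`, `max_tokens`, and `messages`, then hand the response's content blocks to `extract_routing`.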

What's left is an abstraction layer with nothing underneath it to abstract. You're importing ChatOpenAI instead of calling the OpenAI SDK directly. You're wrapping your prompts in PromptTemplate objects that add complexity without adding capability. You're debugging BaseRetriever subclass hierarchies when your RAG pipeline is slow, instead of just looking at the API call.
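For a prompt with a couple of variables, the plain-Python equivalent of a PromptTemplate is just a function that returns a string. A sketch; the function name, wording, and `<ticket>` tags are illustrative choices, not a recommended prompt.

```python
def ticket_summary_prompt(ticket_text: str, max_words: int = 50) -> str:
    """Build the prompt with an ordinary f-string; no template class involved."""
    return (
        f"Summarize the following support ticket in at most {max_words} words, "
        "then name the team that should handle it.\n\n"
        f"<ticket>\n{ticket_text}\n</ticket>"
    )
```

What the model receives is exactly the string this function returns, and it can be tested with a plain `assert`.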

The abstraction isn't helping. It's standing in the way.

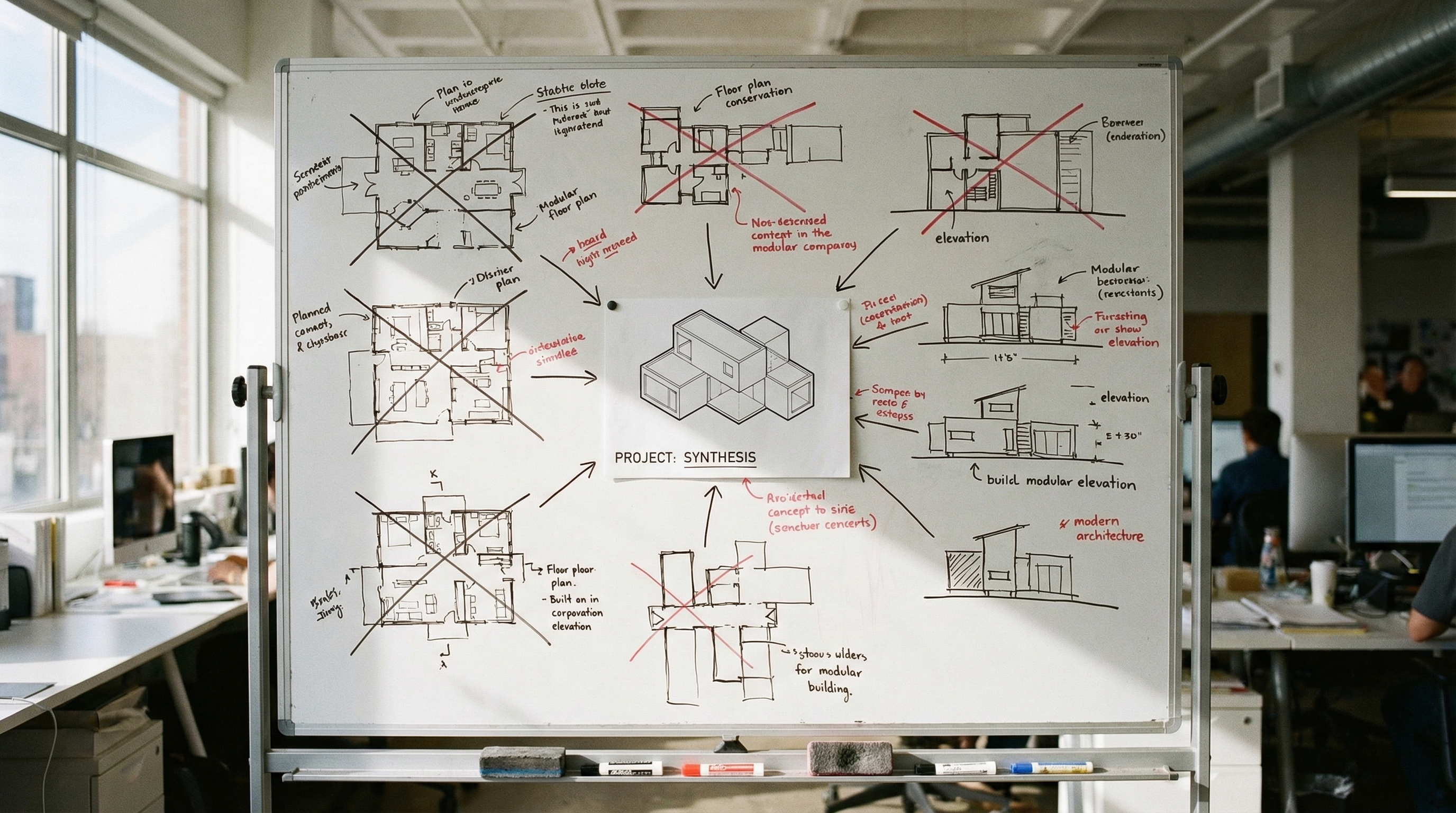

What the best agents actually look like

Here's the architecture of nearly every production-grade AI agent that actually works well:

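```python
while True:
    # Ask the model for its next step, offering it the available tools
    response = model.call(messages, tools)
    # Record the model's own turn so the tool result has context
    messages.append(response)
    if response.wants_tool_call:
        # Run the requested tool and feed its result back to the model
        result = execute_tool(response.tool_call)
        messages.append(result)
    else:
        break  # no tool requested: the model has given its final answer
```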

A model. Some tools. A loop. That's the entire thing.

Claude Code runs on this pattern. So does Devin. So do OpenAI's own agent products. None of them use LangChain. None of them use CrewAI. None of them use AutoGen. The companies closest to the models, the ones who understand LLM capabilities better than anyone, looked at the framework ecosystem and said no thanks.

Anthropic's own agent documentation describes this as the recommended architecture. A senior engineer on the team wrote that "using simple agentic loops, while-loops wrapping alternating LLM API and tool calls, is an effective technique for building AI agents." Not a graph. Not a state machine. Not a workflow engine. A while loop.
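Here is that while loop as a small runnable sketch. It assumes Anthropic-style message shapes (assistant content blocks of type `tool_use`, user content blocks of type `tool_result`, and a `stop_reason` of `"tool_use"`) and replaces the real API call with a hypothetical `fake_model` so it runs offline. `TOOLS`, `get_ticket_priority`, and `run_agent` are illustrative names, not part of any SDK.

```python
from typing import Any, Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[..., Any]] = {
    "get_ticket_priority": lambda ticket_id: "high" if ticket_id.startswith("URG") else "normal",
}


def fake_model(messages: list) -> dict:
    """Hypothetical stand-in for a real model call, shaped like a Messages API reply."""
    last = messages[-1]["content"]
    if isinstance(last, str):
        # First turn: the "model" asks for a tool call.
        return {
            "stop_reason": "tool_use",
            "content": [{"type": "tool_use", "id": "call_1",
                         "name": "get_ticket_priority",
                         "input": {"ticket_id": "URG-42"}}],
        }
    # A tool result came back: produce the final answer.
    priority = last[0]["content"]
    return {"stop_reason": "end_turn",
            "content": [{"type": "text", "text": f"Route to the {priority}-priority queue."}]}


def run_agent(user_prompt: str, model=fake_model, max_turns: int = 10) -> str:
    messages: list = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):  # guard against a model that never stops
        response = model(messages)
        # Keep the assistant turn in history so the tool results have context.
        messages.append({"role": "assistant", "content": response["content"]})
        if response["stop_reason"] != "tool_use":
            # No tool requested: return the concatenated text blocks.
            return "".join(b["text"] for b in response["content"] if b["type"] == "text")
        results = []
        for block in response["content"]:
            if block["type"] == "tool_use":
                output = TOOLS[block["name"]](**block["input"])
                results.append({"type": "tool_result",
                                "tool_use_id": block["id"],
                                "content": str(output)})
        messages.append({"role": "user", "content": results})
    raise RuntimeError("agent did not finish within max_turns")
```

In a real agent, `model` would wrap a direct SDK call and convert its response objects into this dict shape. The `max_turns` cap is a design choice: it keeps a model that never stops requesting tools from looping forever.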

The framework tax is real

There's a cost to frameworks that doesn't show up in any architecture diagram.

When you build directly on a model API, you have one dependency. When that API adds a new feature, you use it immediately. When you use a framework, you have two dependencies: the model API and the framework's wrapper around it. When the API adds streaming tool calls, you wait for LangChain to support it. When Claude ships extended thinking, you wait for your framework to expose it. You're always one release behind the capabilities you're paying for.

LangChain became notorious for this. Breaking changes between releases. API wrappers that lagged behind the actual APIs. Developers reported spending more time keeping their framework integration working than building their actual product. An arXiv study found that 12.35% of all self-admitted technical debt in LLM projects was related to LangChain usage specifically.
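By contrast, with direct SDK calls, adopting a new API feature such as extended thinking is usually one more keyword argument. A sketch, assuming Anthropic's `thinking={"type": "enabled", "budget_tokens": N}` parameter and the budget constraints documented at the time of writing; `build_request` is a hypothetical helper and the model ID is a placeholder.

```python
from typing import Optional

MODEL = "claude-sonnet-4-5"  # placeholder: use whichever model ID you deploy


def build_request(messages: list, max_tokens: int = 16000,
                  thinking_budget: Optional[int] = None) -> dict:
    """Assemble keyword arguments for client.messages.create(**kwargs)."""
    kwargs = {"model": MODEL, "max_tokens": max_tokens, "messages": messages}
    if thinking_budget is not None:
        # Assumed constraints: at least 1,024 tokens and strictly below max_tokens.
        if not 1024 <= thinking_budget < max_tokens:
            raise ValueError("thinking budget must be >= 1024 and below max_tokens")
        # The new feature is one more dict entry; no wrapper release to wait for.
        kwargs["thinking"] = {"type": "enabled", "budget_tokens": thinking_budget}
    return kwargs
```

With the official `anthropic` Python SDK, the call would look like `client.messages.create(**build_request(messages, thinking_budget=8000))`.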

Then there's the token cost. Framework-generated prompts are verbose. System messages you didn't write, formatting you didn't choose, context stuffing you didn't ask for. With direct API calls, every token in the prompt is one you put there on purpose. When you're paying per million tokens, that bloat is a line item on your invoice.
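A back-of-the-envelope way to price that bloat is to multiply the extra tokens per call by call volume and the per-million input price. The function and every figure below are hypothetical, chosen only to show the arithmetic.

```python
def monthly_bloat_cost(extra_tokens_per_call: int, calls_per_day: int,
                       usd_per_million_input_tokens: float, days: int = 30) -> float:
    """Cost of prompt tokens you did not choose to send, over `days` days."""
    extra_tokens = extra_tokens_per_call * calls_per_day * days
    return extra_tokens / 1_000_000 * usd_per_million_input_tokens
```

For instance, 800 unrequested tokens per call at 50,000 calls a day and an illustrative $3 per million input tokens is 1.2 billion tokens, or $3,600, over 30 days.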

The CrewAI problem

CrewAI is a different flavor of the same issue. Instead of wrapping API calls, it wraps the concept of agents themselves. You define "crews" of agents with "roles" and "goals" and "backstories," and the framework orchestrates their interactions.

It sounds great in a demo. In production, you're debugging why Agent A passed malformed JSON to Agent B, and the error is somewhere inside the framework's inter-agent communication layer. You didn't write that layer. You can't easily modify it. You're at the mercy of someone else's assumptions about how agents should talk to each other.

The better approach, and the one I see working in production, is just writing the orchestration yourself. Call one model, take its output, feed it to the next call. No framework. No agent personas. No backstory strings that burn tokens without adding capability. Just code that does what you need it to do, and nothing else.
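That hand-written orchestration can be as small as two calls with a validation step between them, so malformed JSON fails loudly in your own code rather than inside a framework. A sketch; `call_model` is a hypothetical callable (prompt in, text out) standing in for a direct SDK call, and the field names are illustrative.

```python
import json

REQUIRED_KEYS = {"customer", "issue"}


def parse_step_output(raw: str) -> dict:
    """Validate one model call's JSON before it reaches the next call."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"extract step returned invalid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("extract step returned JSON that is not an object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"extract step output is missing keys: {sorted(missing)}")
    return data


def pipeline(ticket: str, call_model) -> str:
    # Step 1: one call extracts structured fields.
    extracted = parse_step_output(call_model(f"Extract customer and issue as JSON:\n{ticket}"))
    # Step 2: its validated output becomes the next call's input.
    return call_model(f"Draft a reply to {extracted['customer']} about: {extracted['issue']}")
```

If the first call returns bad JSON, the traceback ends in `parse_step_output`, a function your team wrote and can change.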

The jQuery parallel

This has happened before. In 2008, you needed jQuery because browser APIs were fragmented and painful. querySelector didn't work everywhere. AJAX was inconsistent. jQuery was genuinely necessary.

Then browsers standardized. fetch landed. The native DOM API got good. jQuery didn't become bad; it became unnecessary. The websites still using it were carrying dead weight: a dependency that added bundle size without adding capability.

Agent frameworks are in the exact same position. They emerged when model APIs were limited. Those APIs matured. The frameworks didn't step aside gracefully, because venture capital doesn't incentivize graceful exits. LangChain has raised over $260 million. CrewAI has raised significant funding. That money needs a return, which means the pitch has to keep working, even when the underlying problem has been solved.

The incentive structure you should notice

LangChain's open-source framework is free. It wraps your API calls in layers of abstraction that make your application opaque. When your agent breaks (and it will), the error is somewhere inside the framework's class hierarchy.

LangSmith, LangChain's commercial product, sells you observability and debugging for LangChain applications. The framework creates the opacity. The paid product sells you the transparency.

I'm not saying this is malicious. It's the logical outcome of venture-funded infrastructure in a fast-moving space. But you should notice the dynamic. The company selling you the complexity also sells you the solution to that complexity. With direct API calls, you don't need either product.

What stripping layers actually looks like

A team I worked with last quarter had a LangChain-based document processing pipeline. It used RecursiveCharacterTextSplitter, OpenAIEmbeddings, Chroma, RetrievalQA, and a custom OutputParser. Five framework components for what amounted to: split text, embed it, retrieve relevant chunks, ask the model a question.

We replaced it with direct API calls. Split text with a simple Python function. Call the embeddings API directly. Store vectors in a straightforward database. Call Claude with the retrieved context. Four function calls, no framework imports, no class hierarchies, no callback handlers.

The pipeline was 40% faster because we eliminated the framework overhead. Token costs dropped because we controlled exactly what went into each prompt. And when it broke, the error message pointed to a line in our code, not a file deep inside someone else's package.
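The four steps described above can be sketched as four small functions. This is a minimal offline illustration, not the team's actual code: `embed` is a toy bag-of-words stand-in for a real embeddings API call (an assumption so the example runs without network access), vectors live in in-memory lists rather than a database, and the chunk sizes are arbitrary.

```python
import math
import re
from collections import Counter


def split_text(text: str, chunk_size: int = 500, overlap: int = 100) -> list:
    """Split into fixed-size character chunks that overlap by `overlap` characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]


def embed(text: str) -> Counter:
    """Toy bag-of-words vector so this runs offline.
    Assumption: in a real pipeline this is one call to an embeddings API."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norms = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norms if norms else 0.0


def retrieve(query: str, chunks: list, vectors: list, k: int = 3) -> list:
    """Return the k chunks whose vectors are most similar to the query."""
    q = embed(query)
    ranked = sorted(zip(chunks, vectors), key=lambda cv: cosine(q, cv[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, context: list) -> str:
    """The exact text sent to the model: retrieved chunks plus the question."""
    joined = "\n---\n".join(context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"
```

The string from `build_prompt` is what you would send to Claude as the user message, so every token in it is one you can see and control.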

When a framework still makes sense

Prototyping. If you need to prove a concept in an afternoon and you'll throw the code away, a framework's pre-built integrations save real time. LangChain is excellent for hackathons and proof-of-concept demos that will never see production traffic.

That's about it.

For anything that needs to run reliably, that needs to evolve as model capabilities change, that needs to be debugged by the team maintaining it, direct API calls win. Every time.

The shift is already happening

The best AI developers I know have all gone through the same arc. They started with frameworks because that's what the tutorials taught. They hit a wall when the framework's assumptions didn't match their use case. They spent a frustrating week fighting abstractions instead of building features. Then they stripped everything back to direct API calls and never looked back.

The models are good enough now. Claude can handle tool use, structured output, multi-turn reasoning, and complex orchestration natively. You don't need a middleware layer to coax it into doing these things. You just ask.

Your framework was the right call twelve months ago. It's technical debt today. The sooner you recognize that, the sooner you stop debugging someone else's code and start building your own product.

Audit your agent stack and identify where a framework is adding indirection without clear benefits; try rewriting one workflow using direct model SDK calls plus a simple tool-calling loop. Favor the smallest set of abstractions that you can fully understand and debug, and measure the impact on latency, token cost, and failure modes. If you keep a framework, treat it as a dependency with real upgrade and feature-lag risk, and have a plan to bypass it when the underlying API adds capabilities you need.
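To compare a framework version against a direct-API rewrite, run both through the same small harness. A sketch under stated assumptions: each variant is wrapped as a zero-argument callable returning a dict with a `usage` entry holding `input_tokens` and `output_tokens` (the field names on Anthropic's usage object); `measure` is a hypothetical helper, not a library function.

```python
import statistics
import time
from typing import Callable


def measure(call: Callable[[], dict], runs: int = 5) -> dict:
    """Time repeated calls, total their token usage, and count failures."""
    latencies, input_tokens, output_tokens, failures = [], 0, 0, 0
    for _ in range(runs):
        start = time.perf_counter()
        try:
            result = call()
        except Exception:
            failures += 1  # count any failure instead of aborting the run
            continue
        latencies.append(time.perf_counter() - start)
        input_tokens += result["usage"]["input_tokens"]
        output_tokens += result["usage"]["output_tokens"]
    return {
        "median_latency_s": statistics.median(latencies) if latencies else None,
        "input_tokens": input_tokens,
        "output_tokens": output_tokens,
        "failures": failures,
    }
```

Running `measure` on both versions with the same inputs gives a like-for-like view of latency, token spend, and how often each one breaks.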