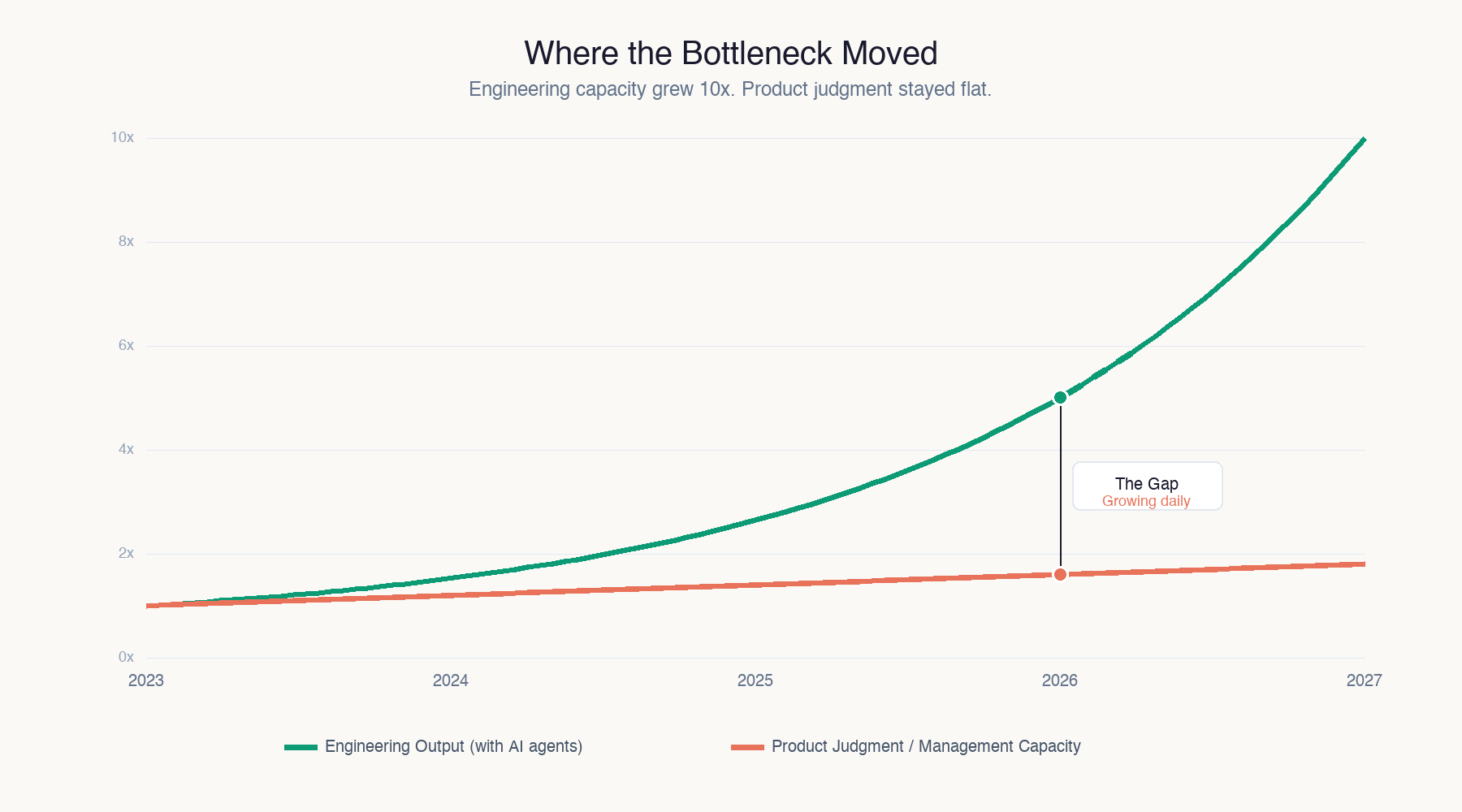

- AI has massively increased engineering output, but product judgment and prioritization have not scaled with it.

- The core constraint has shifted from “can we build it?” to “should we build it?”, and the management layer is now the bottleneck.

- When build capacity outpaces decision quality, organizations produce “busyware”: impressive volume of features that solve unclear problems and create maintenance drag.

- The gap in perceived AI time savings between executives and non-managers reflects authority: executives can choose what to skip, while non-managers still execute a pre-AI backlog at higher speed.

- Traditional PM-to-engineer ratios were designed for slower throughput, so PMs now face a multiplied curation load and must say “no” more often with higher conviction.

Engineering capacity just 10x'd. Product judgment didn't. That's the whole problem, and almost nobody is talking about it.

Every conversation about AI in organizations focuses on the build side. How fast can we ship? How many agents are developers running? Bloomberg found executives literally tracking "interactions per day" with coding agents, treating Claude Code bills like a productivity leaderboard. Databricks reports that 80% of databases on their platform are now built by AI agents. The supply side of software just exploded.

But supply was never the real constraint. The constraint was always deciding what to build, and that constraint just got worse.

The bottleneck moved and nobody noticed

For twenty years, the default answer to "why isn't this feature live?" was some version of "engineering capacity." We don't have enough developers. The sprint is full. The backlog is six months deep. Every product decision was filtered through scarcity: we can only build three things this quarter, so pick carefully.

That scarcity is evaporating. When an engineering team with AI agents can prototype in hours what used to take weeks, the backlog isn't the problem anymore. A team that previously shipped four features per quarter can now ship twelve. Maybe twenty.

The question "can we build this?" has been answered. The question "should we build this?" has not. And the people responsible for answering it, product managers, directors, VPs, the entire management layer, are still operating at pre-AI speed.

The busyware explosion

You can already see what happens when build capacity outpaces product judgment. Bloomberg identified the phenomenon and called it "busyware": features nobody asked for, dashboards built for an audience of one, half-baked demos that engineering must now maintain.

This isn't hypothetical. I'm seeing it in organizations right now. Teams are shipping more than ever. The volume of output is genuinely impressive. But when you ask "who requested this?" or "what problem does this solve?" the answers get vague. "We had capacity." "It seemed useful." "The PM thought it would be good to have."

That's what a supply-side explosion looks like when the demand side hasn't kept up. More output, same judgment. The result isn't better products. It's more products, most of which shouldn't exist.

The C-suite perception gap

A Section survey found that 40% of C-suite executives said AI saves them at least eight hours a week. Meanwhile, 67% of non-managers said it saves them fewer than two hours.

The standard reading of this gap is that executives overestimate AI's impact. I think it reveals something different. Executives control their own priorities. They decide what to delegate to an agent and what to skip. They're operating as their own product managers, making judgment calls about what's worth doing.

Non-managers don't have that authority. They receive a prioritized backlog and execute against it. The backlog was designed for pre-AI throughput. Nobody updated the prioritization layer to account for the fact that execution speed tripled.

The executives who gained eight or more hours did so because they're making their own build-or-skip decisions. The individual contributors gained less than two hours because the management layer above them is still feeding them the same volume of pre-prioritized work, just expecting it done faster.

Product management didn't scale

Here's the structural problem. Most organizations have a ratio of product managers to engineers that was calibrated for the old world. One PM might own a backlog for a team of six engineers. That PM's job was part curator, part referee, deciding what gets built, in what order, with what tradeoffs.

When those six engineers become three times as productive, the PM's curation load triples. They need to evaluate more ideas, make more prioritization calls, say "no" more often, and do it with higher conviction because the cost of building the wrong thing didn't decrease. The wrong thing just gets built faster.

But nobody tripled the PM headcount. Nobody restructured the decision-making process. Nobody gave the management layer new tools for evaluating what's worth building at higher throughput. The engineering side got AI agents. The product side got the same whiteboard and the same quarterly planning cycle.
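The arithmetic behind that tripled load is simple enough to sketch. The snippet below is a back-of-the-envelope illustration, not a staffing model: the function names, the figure of two proposals per engineer per quarter, and the assumption that a PM's evaluation capacity stays fixed are all hypothetical. Only the six-engineer team and the 3x speedup come from the scenario above.

```python
import math

def decisions_per_pm(engineers, proposals_per_engineer, speedup, pms=1):
    """Build-or-skip decisions each PM must make per quarter."""
    return engineers * proposals_per_engineer * speedup / pms

def pms_needed(engineers, proposals_per_engineer, speedup, capacity_per_pm):
    """PMs required to keep each PM at or below a fixed decision capacity."""
    total = engineers * proposals_per_engineer * speedup
    return math.ceil(total / capacity_per_pm)

# Hypothetical baseline: each engineer surfaces 2 buildable ideas per quarter.
before = decisions_per_pm(engineers=6, proposals_per_engineer=2, speedup=1)
after = decisions_per_pm(engineers=6, proposals_per_engineer=2, speedup=3)

print(before, after)  # decision load for one PM, before and after the speedup
print(pms_needed(6, 2, 3, capacity_per_pm=before))  # PMs needed to hold load flat
```

Under these assumptions, one PM goes from 12 decisions a quarter to 36, and holding the per-PM load flat would take three PMs. The exact numbers matter less than the shape: decision load scales linearly with build speed unless something on the product side changes.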

The evaluation deficit

The real skill gap in 2026 isn't technical. Any team with access to Claude Code or Cursor can build fast. The scarce capability is evaluation: looking at a prototype, a feature request, a market signal, and making a fast, correct call about whether it deserves engineering time.

That capability was always rare. Product judgment, real product judgment, not just saying yes to whatever the loudest stakeholder wants, has always been the hardest skill in technology organizations. But when building was slow, bad judgment was partially hidden by scarcity. You could only build three things, so even a mediocre prioritizer would get at least one right by accident.

When you can build thirty things, bad prioritization compounds. Every wrong call burns agent compute, creates maintenance burden, fragments user experience, and dilutes focus. The cost of bad judgment went up precisely because the cost of building went down.

What restructuring actually looks like

The organizations getting this right are making structural changes, not just adding AI tools to the existing org chart.

They're rebalancing the ratio. Instead of one PM for six engineers, they're moving toward higher PM density or creating dedicated evaluation roles. Someone has to look at the twenty prototypes that got built this week and decide which three become real products.

They're killing faster. The old model was to agonize over whether to start building something. The new model is to build the prototype in a day, evaluate it against real criteria, and kill it if it doesn't pass. This requires a willingness to throw away working code, which most organizations still find psychologically difficult.

They're measuring judgment, not output. When your engineering metrics show record throughput but your product metrics show flat engagement, that's a judgment problem wearing an output costume. The teams I work with that are actually improving are tracking the hit rate of new features, not just the ship rate.

They're giving the management layer AI tools too. Not for building, but for analysis. Market research, competitive intelligence, user behavior synthesis, A/B test interpretation. If you're going to ask product managers to evaluate three times as many ideas, give them tools that help them evaluate faster.
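To make the difference between ship rate and hit rate concrete, here is a minimal sketch. The `Feature` record, the `met_success_metric` flag, and the sample data are all made up for illustration; in practice, what counts as a "hit" depends on whatever success metric the team committed to before building.

```python
from dataclasses import dataclass

@dataclass
class Feature:
    name: str
    shipped: bool
    met_success_metric: bool  # hypothetical flag: did it reach its agreed target?

def ship_count(features):
    """How many features went live (the output metric)."""
    return sum(1 for f in features if f.shipped)

def hit_rate(features):
    """Share of shipped features that met their success metric (the judgment metric)."""
    shipped = [f for f in features if f.shipped]
    if not shipped:
        return 0.0
    hits = sum(1 for f in shipped if f.met_success_metric)
    return hits / len(shipped)

# Illustrative quarter: four features shipped, one of them landed.
quarter = [
    Feature("bulk export", shipped=True, met_success_metric=True),
    Feature("internal dashboard", shipped=True, met_success_metric=False),
    Feature("onboarding checklist", shipped=True, met_success_metric=False),
    Feature("dark mode", shipped=True, met_success_metric=False),
    Feature("ai summary", shipped=False, met_success_metric=False),
]

print(ship_count(quarter), hit_rate(quarter))
```

A team can double the first number while the second one falls. Tracking both side by side is what exposes a judgment problem that throughput dashboards hide.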

The uncomfortable truth

Most organizations are proud of how fast they're shipping. The sprint velocity metrics look incredible. The CEO's slide deck shows a 4x increase in features deployed. The engineering team is running hot.

Nobody is asking whether those features should exist.

The management layer, the product decisions, the prioritization frameworks, the roadmap discipline, all of it was built for a world where building was expensive and slow. That world is gone. And the organizations that don't restructure their decision-making to match their new build capacity will produce more software, not better software.

The bottleneck moved from the hands to the head. Engineering isn't the constraint anymore. Judgment is. And until the management layer catches up, all that AI-powered build capacity is just generating busyware at unprecedented speed.

Treat prioritization as the scarce resource, not engineering hours. Tighten your intake: require a clear user, problem, and success metric before anything gets built, and regularly kill or pause work that doesn’t earn its keep. If you’re a manager or PM, redesign your process for higher throughput (faster decisions, fewer projects in flight, stronger “no” muscle); if you’re an IC, push back on vague requests and ask for the problem statement and expected impact before you ship more busyware.
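The intake rule above is easy to encode as a checklist. What follows is a minimal sketch, assuming a hypothetical `Proposal` record with three free-text fields; a real intake process would live in your ticketing tool and would judge the quality of the answers, not just whether they exist.

```python
from dataclasses import dataclass

@dataclass
class Proposal:
    title: str
    user: str = ""            # who is this for?
    problem: str = ""         # what problem does it solve?
    success_metric: str = ""  # how will we know it worked?

REQUIRED = ("user", "problem", "success_metric")

def missing_fields(proposal):
    """Return the required fields that are empty or whitespace-only."""
    return [name for name in REQUIRED if not getattr(proposal, name).strip()]

def ready_to_build(proposal):
    """A proposal passes intake only when every required field is filled in."""
    return not missing_fields(proposal)

vague = Proposal(title="Admin dashboard", user="ops team")
clear = Proposal(
    title="Bulk export",
    user="finance analysts",
    problem="monthly reports require manual copy-paste",
    success_metric="report prep time drops below one hour",
)

print(ready_to_build(vague), missing_fields(vague))
print(ready_to_build(clear), missing_fields(clear))
```

A gate like this doesn't replace judgment. It only makes the "we had capacity" answer impossible to submit.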