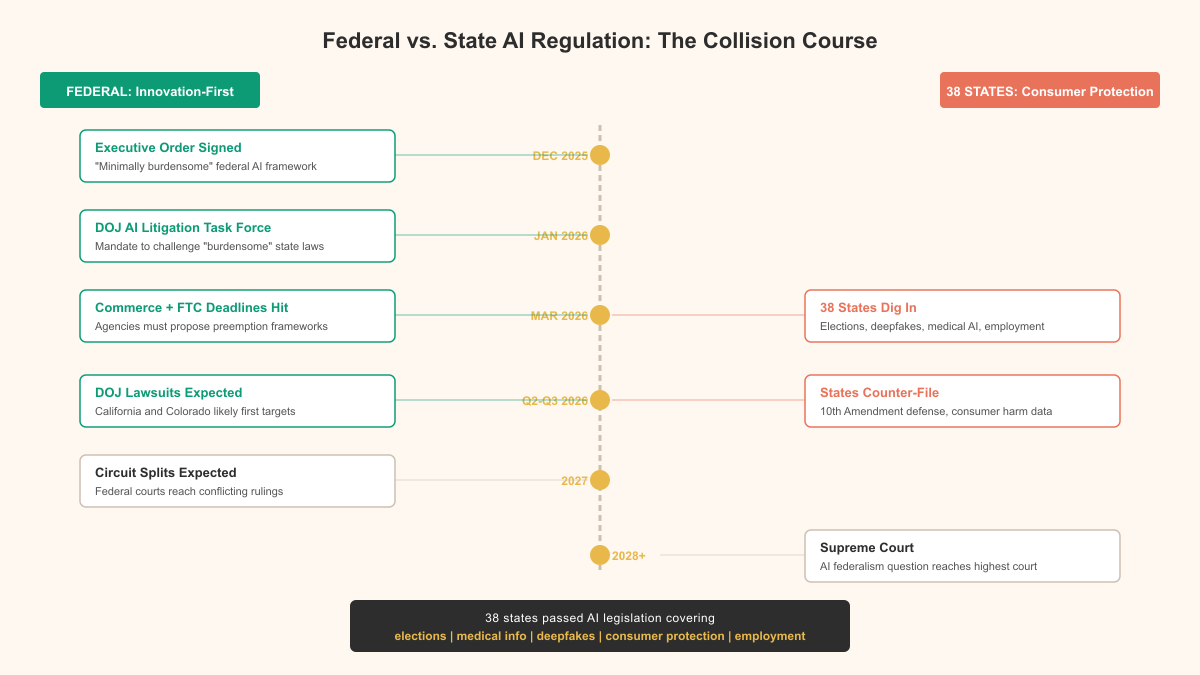

- A December 2025 executive order set a “minimally burdensome” national AI framework that effectively signals federal intent to override many state AI laws.

- The administration built a step-by-step preemption strategy: declare policy, create a DOJ AI Litigation Task Force, then use Commerce and FTC deliverables to justify targeting specific state statutes.

- Thirty-eight states have enacted AI rules in areas where federal law is largely absent, including elections, health data, deepfakes, consumer transparency, and hiring, and major states are already in active compliance phases.

- The article argues the legal basis for preemption is weak because there is no comprehensive federal AI statute and executive orders/policy statements don’t automatically invalidate state laws.

- Because policy tools alone can’t preempt state legislation, the DOJ task force appears designed to pursue preemption through federal court challenges instead.

Two deadlines hit on March 11, 2026. The Secretary of Commerce had to publish an evaluation identifying state AI laws deemed "burdensome" to innovation. The FTC had to issue a policy statement on how the FTC Act applies to AI models. Both come from the same executive order, signed by President Trump in December 2025, titled "Ensuring a National Policy Framework for AI." The stated goal: global AI dominance through a "minimally burdensome national policy framework."

The unstated goal is more blunt. The federal government is laying the groundwork to override 38 states' worth of AI legislation. Consumer protection reframed as a barrier to progress.

The collision course was built deliberately

This was not an accidental policy conflict. It was engineered in stages.

December 2025: the executive order establishes federal preemption as official US AI policy. The language is careful but the intent is clear: any state law the federal government considers excessive can be flagged for removal.

January 2026: Attorney General Pam Bondi establishes the DOJ AI Litigation Task Force. A dedicated unit designed to challenge state AI laws in federal court. Not review them. Challenge them. The mandate is offensive, not analytical.

March 2026: the Commerce Department and FTC deadlines activate, producing the formal justification for going after specific state laws.

You can see the logic. First you declare a policy. Then you build the enforcement mechanism. Then you generate the evidence to justify using it. It's a litigation strategy wearing a policy development costume.

What the states actually built

The 38 states that passed AI legislation weren't winging it. Most of their laws address areas where the federal government has been conspicuously silent.

| Category | Examples | States Active |
|---|---|---|
| Election integrity | Disclosure requirements for AI-generated political ads, deepfake prohibitions in campaigns | 19 states |
| Medical information | Restrictions on AI processing of health data without consent, algorithmic bias audits in healthcare decisions | 14 states |
| Deepfakes | Criminal penalties for non-consensual AI-generated intimate images, identity protection for public figures | 22 states |
| Consumer protection | Transparency requirements for AI-driven pricing, automated decision-making disclosure | 11 states |
| Employment | Regulations on AI in hiring decisions, bias auditing requirements for automated screening tools | 9 states |

California, Texas, and Colorado are entering compliance phases for their respective AI frameworks. These aren't theoretical laws. Companies have already spent real money implementing them: compliance teams hired, systems built, auditing processes put in place.

And the federal government is now preparing to argue that all of this was unnecessary. Or worse, counterproductive.

The preemption argument doesn't hold up

Federal preemption has a specific legal meaning. The federal government can override state law when there's a direct conflict between state and federal requirements, or when Congress has clearly expressed intent to occupy an entire regulatory field. Neither condition is met here.

There is no federal AI law. Congress hasn't passed comprehensive AI legislation. The executive order is a policy directive, not a statute. And an executive order can't preempt state legislation on its own; that requires either an act of Congress or a regulatory framework created under existing federal authority.

The Commerce Department's "burdensome" evaluation? A policy document, not a legal ruling. The FTC's policy statement on applying the FTC Act to AI models? It extends existing authority rather than creating new exclusive federal jurisdiction. Neither gives the federal government the legal authority to invalidate state laws.

Which is exactly why the DOJ task force exists. The administration knows it can't preempt through policy alone, so it's gearing up to do it through litigation: challenge individual state laws in federal court and argue, case by case, that specific provisions conflict with federal policy or impose unconstitutional burdens on interstate commerce.

It's going to be expensive, slow, and messy. Years of legal uncertainty for every company operating across state lines.

The real question is who benefits

Strip away the policy language and the legal maneuvering. Who does a "minimally burdensome" federal framework actually serve?

Not consumers. State AI laws exist because people were experiencing real harms: deepfake pornography, algorithmic discrimination in hiring, undisclosed AI-generated political content, health data processed without consent. These aren't hypothetical risks. They're documented problems that states responded to because nobody else would.

Not most businesses, either. The companies that benefit from regulatory minimalism are the largest AI developers. The ones with enough market power to operate without constraints and enough legal muscle to fight state-by-state enforcement. For mid-sized companies (and I've talked to plenty of them), regulatory uncertainty is worse than strict regulation. You can comply with a clear rule. You can't comply with a rule that might be invalidated next month.

The companies that actually asked for federal preemption make up a short list. They are the same companies whose lobbying disclosures show eight-figure annual spending on AI policy. They want a single, permissive federal standard because it's cheaper than complying with 38 different state standards, even when those state standards exist to protect the people those companies serve.

What happens next

The litigation phase is going to follow a pretty predictable path.

| Phase | Timeline | What to expect |
|---|---|---|
| Initial challenges | Q2-Q3 2026 | DOJ task force files suits against California and Colorado AI laws, targeting disclosure and auditing requirements |
| Industry amicus briefs | Q3 2026 | Major AI companies file supporting briefs arguing state laws create compliance burdens that harm innovation |
| State coalitions | Q4 2026 | States form defensive coalitions, sharing legal resources and coordinating responses |
| Circuit splits | 2027 | Different federal circuits reach different conclusions on preemption scope, creating a patchwork of rulings |
| Supreme Court | 2028 or later | The question of AI regulatory preemption reaches the Supreme Court, likely through a California or Texas case |

Meanwhile, companies face the worst possible regulatory environment. Active state laws that might be invalidated. No federal replacement on the horizon. Ongoing litigation that could change the rules at any point. The "minimally burdensome" framework is, in practice, maximally uncertain.

The pattern we should recognize

We've seen this before. The same playbook was used against state environmental regulations, state financial regulations, and state privacy laws. The argument never changes: state-by-state compliance is too expensive, and a unified federal approach would be better for everyone.

The problem is that "better for everyone" consistently means "a federal standard weaker than what the strictest states required." Federal preemption in practice doesn't raise all states to the highest standard. It pulls the most protective states down to the lowest common denominator.

If Congress were simultaneously passing comprehensive AI legislation with strong consumer protections, I'd find the preemption argument more compelling. A clear federal standard, even a strict one, would genuinely reduce compliance complexity. But that's not what's happening. The federal government is dismantling state protections without replacing them with anything equivalent.

The 38 states that passed AI laws did so because their residents needed protection and nobody in Washington was providing it. Treating that response as a problem to be litigated away, rather than a signal worth listening to, tells you everything about whose interests are actually driving this.

The states didn't pick this fight. But they're going to have to finish it.

If you operate, build, or buy AI systems, plan for a period of regulatory whiplash: state compliance obligations may remain enforceable while the federal government tries to knock them out in court. Track the Commerce “burdensome” list, the FTC’s AI policy statement, and early DOJ lawsuits, because those will signal which state requirements are most at risk and how enforcement may shift. In the meantime, avoid ripping out existing state-law compliance controls too quickly. Document your risk assessments and be ready to adjust policies as litigation outcomes and federal guidance evolve.